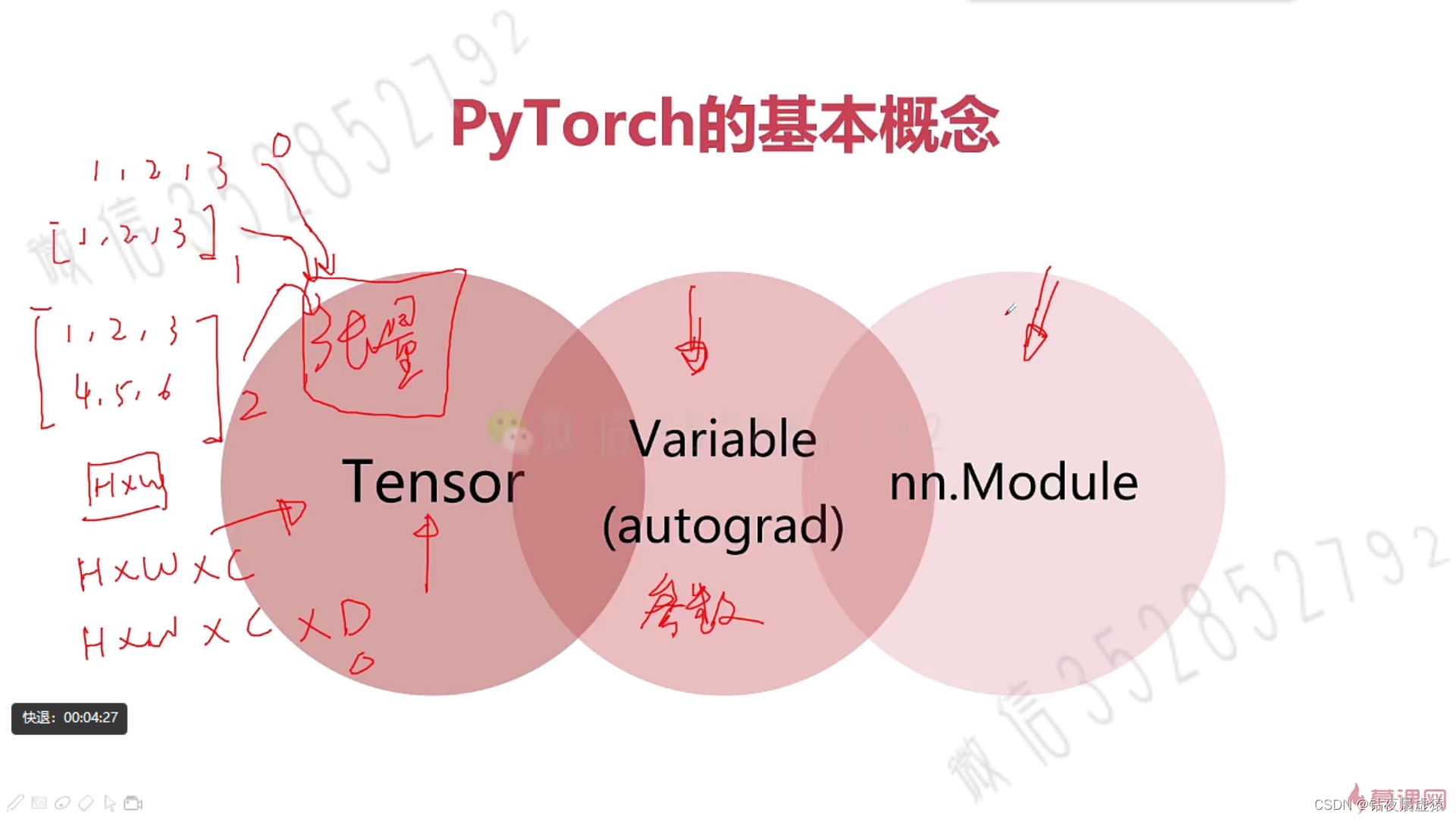

III. PyTorch Fundamentals

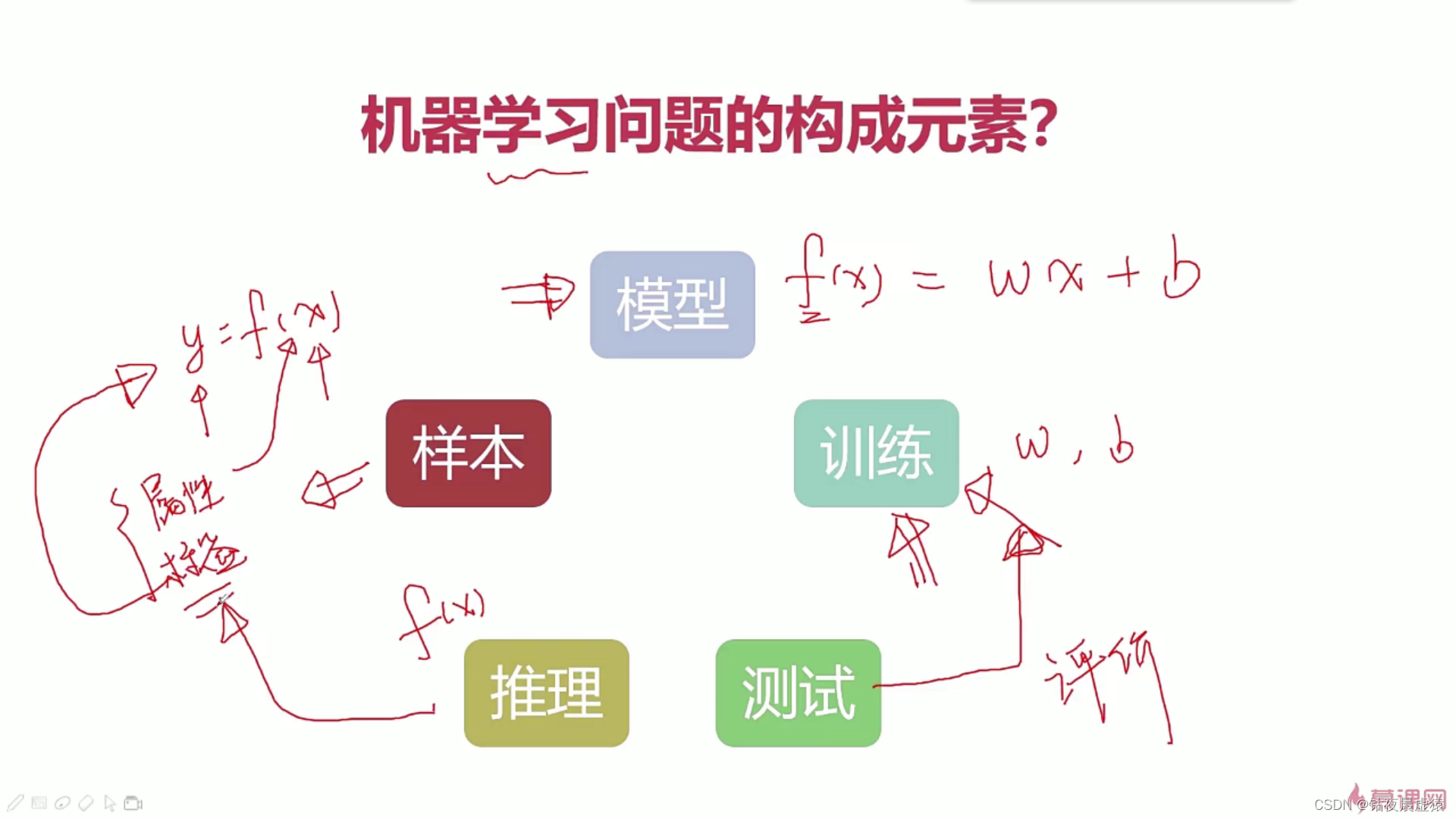

1. Classification and Regression in Machine Learning: The Basic Building Blocks of Machine Learning

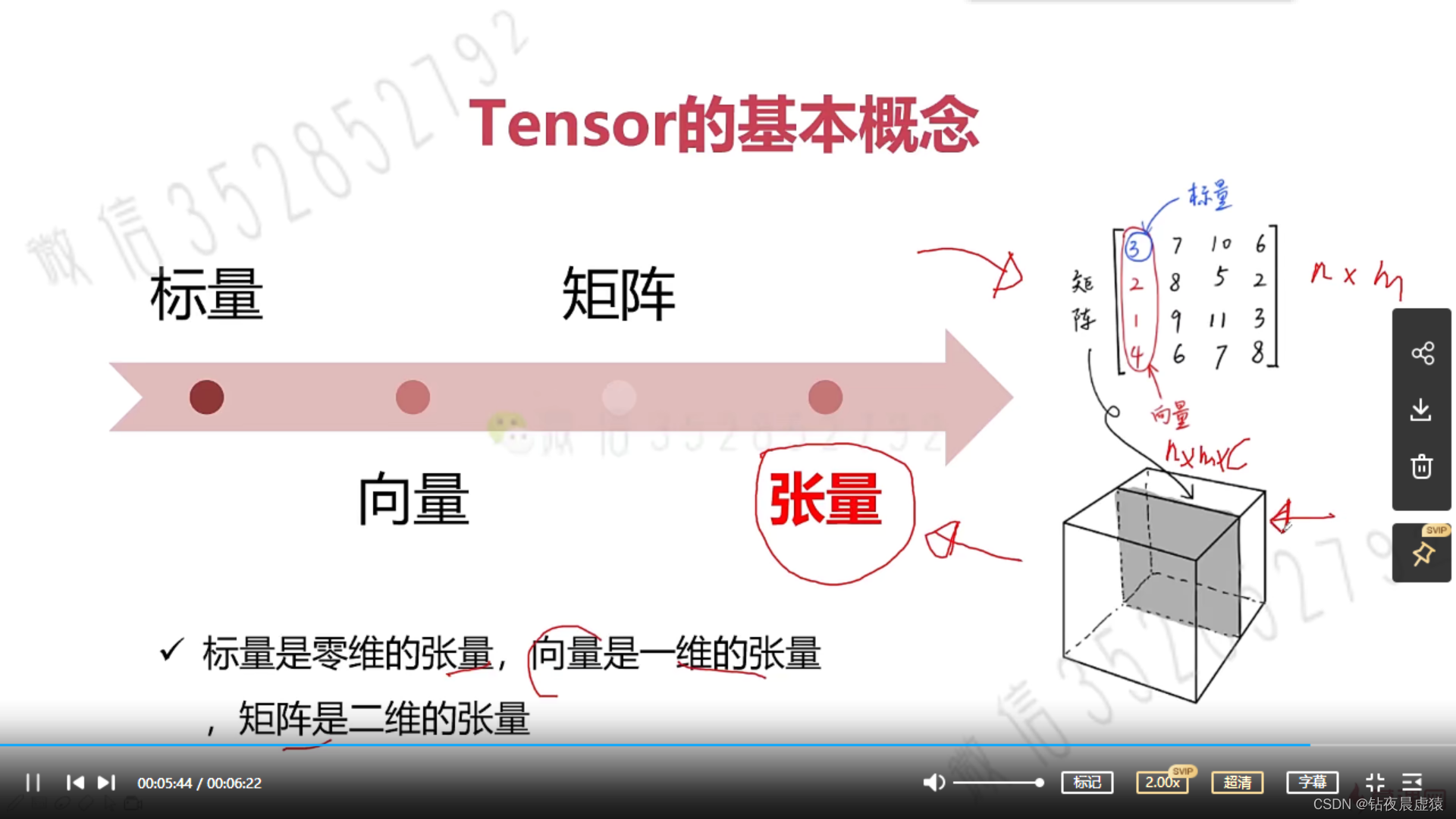

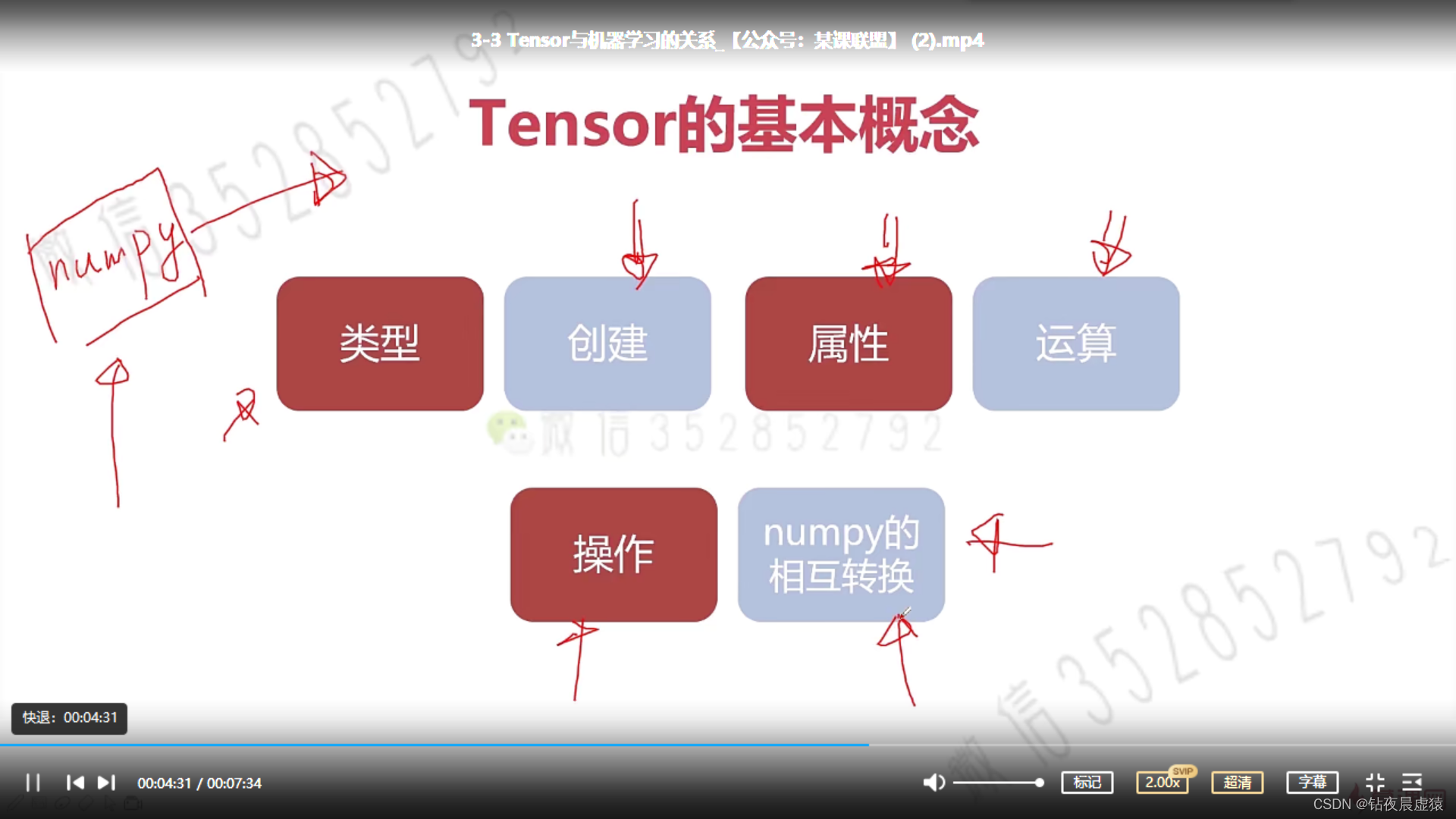

2. Basic Definition of a Tensor

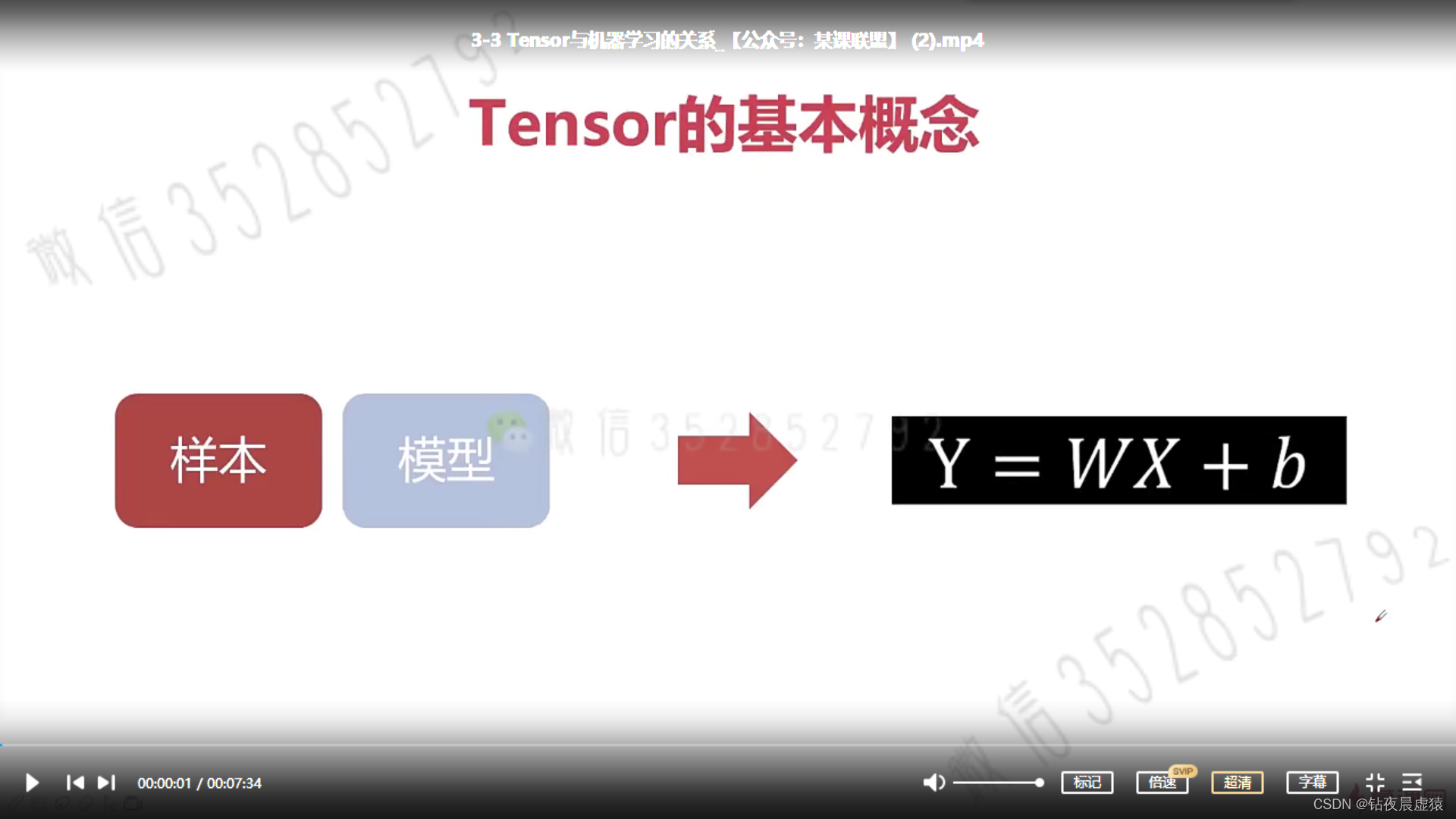

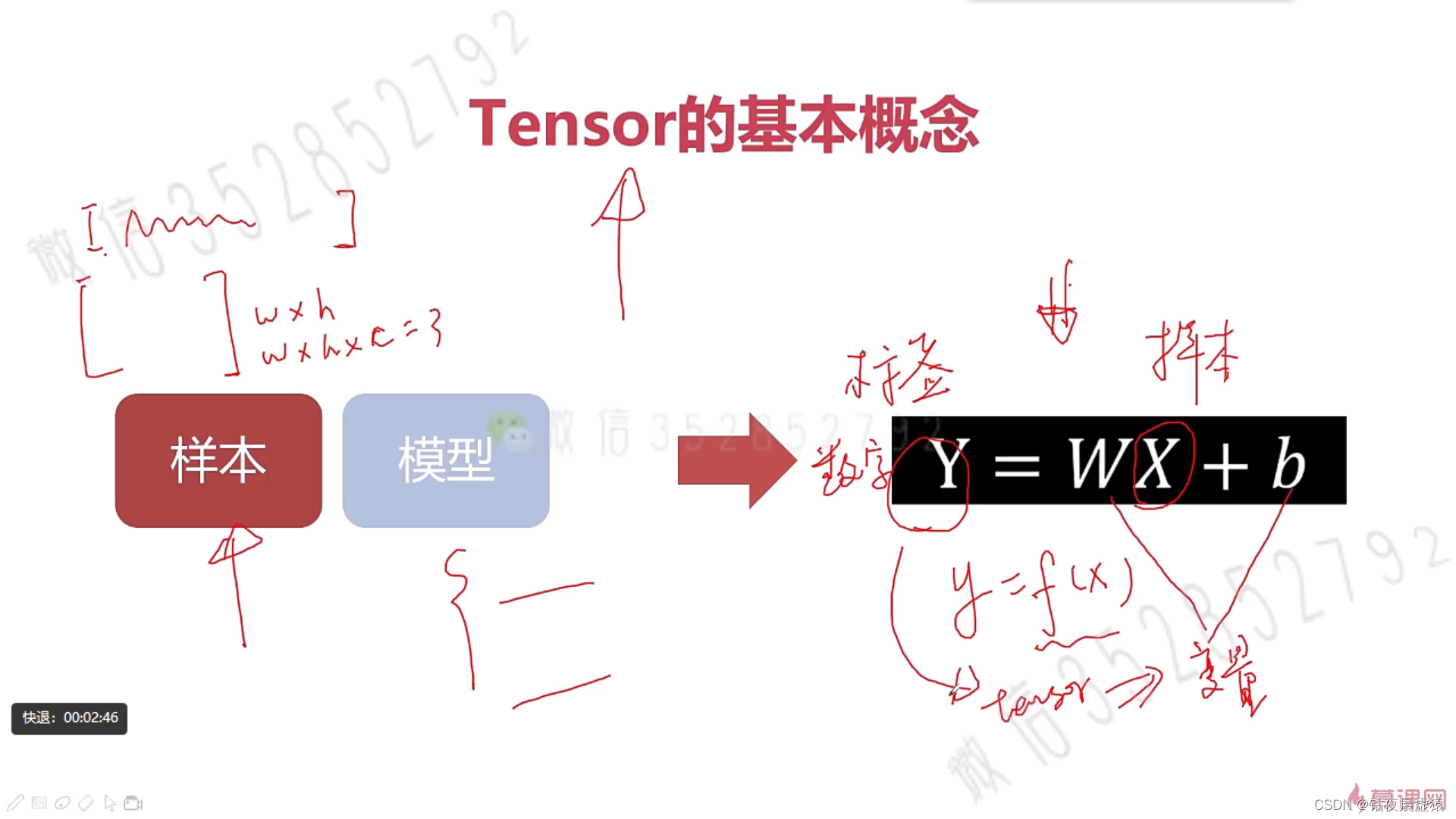
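
The definition itself is only given in the screenshots above. As a minimal sketch, assuming the usual reading (a tensor is an n-dimensional array, with scalars, vectors, and matrices as the 0-, 1-, and 2-dimensional cases), the variable names below are illustrative only:

```python
import torch

# Illustrative names; each tensor has one more dimension than the previous one.
scalar = torch.tensor(3.0)                        # 0-dimensional: a single number
vector = torch.tensor([1.0, 2.0, 3.0])            # 1-dimensional
matrix = torch.tensor([[1.0, 2.0], [3.0, 4.0]])   # 2-dimensional
cube = torch.zeros(2, 3, 4)                       # 3-dimensional

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("cube", cube)]:
    # ndim is the number of dimensions, shape is the size along each one
    print(name, t.ndim, tuple(t.shape))
```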

3. How Tensors Relate to Machine Learning

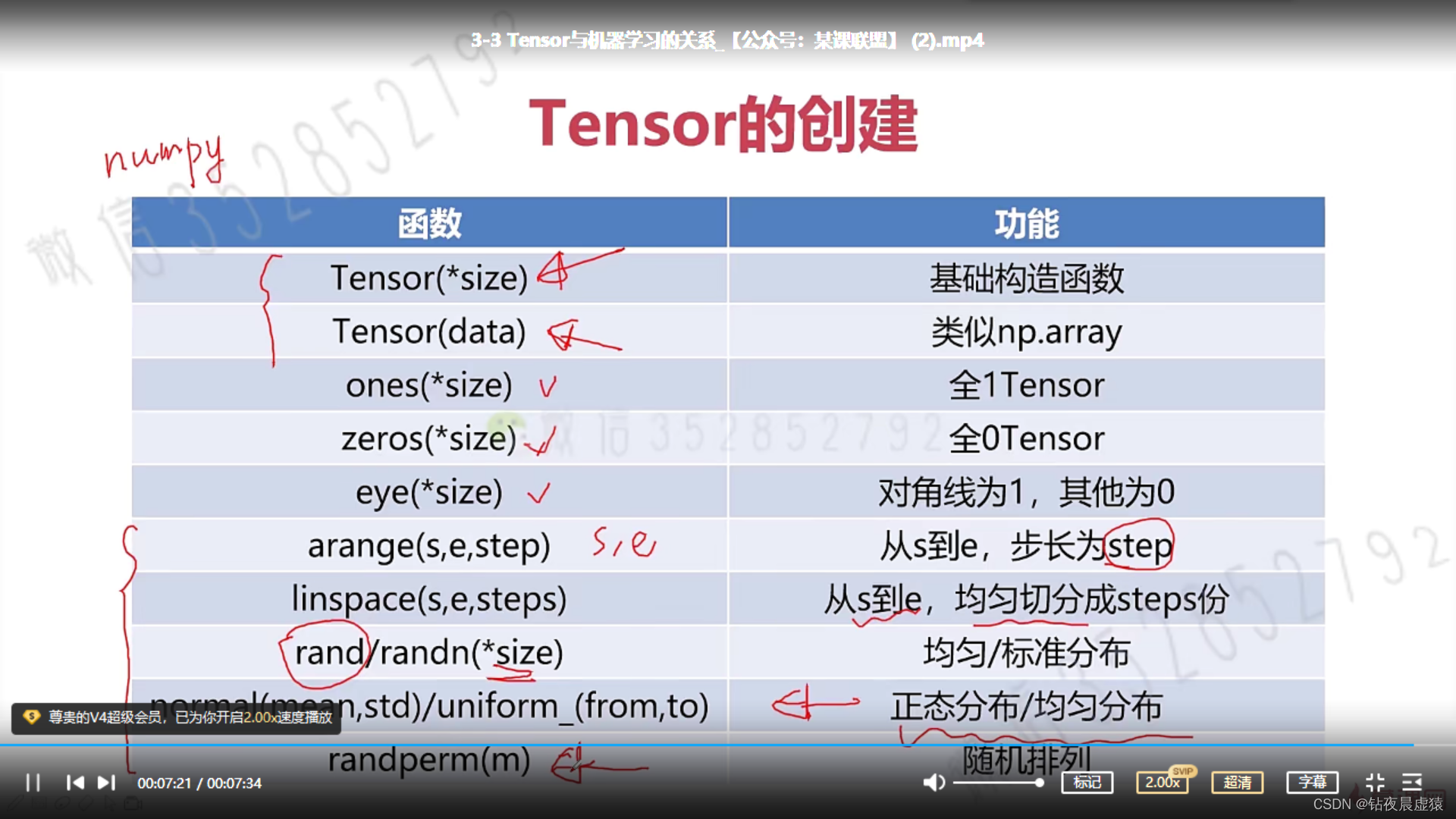

4. Creating Tensors: Programming Examples

import torch

a = torch.Tensor([[1, 2], [3, 4]])

print(a)

print(a.type())

a = torch.Tensor(2, 3)  # uninitialized 2 x 3 float tensor; the values are arbitrary

print(a)

print(a.type())

'''Several special tensors'''

a = torch.eye(2, 2)

print(a)

print(a.type())

b = torch.Tensor(2, 3)

b = torch.zeros_like(b)

b = torch.ones_like(b)

print(b)

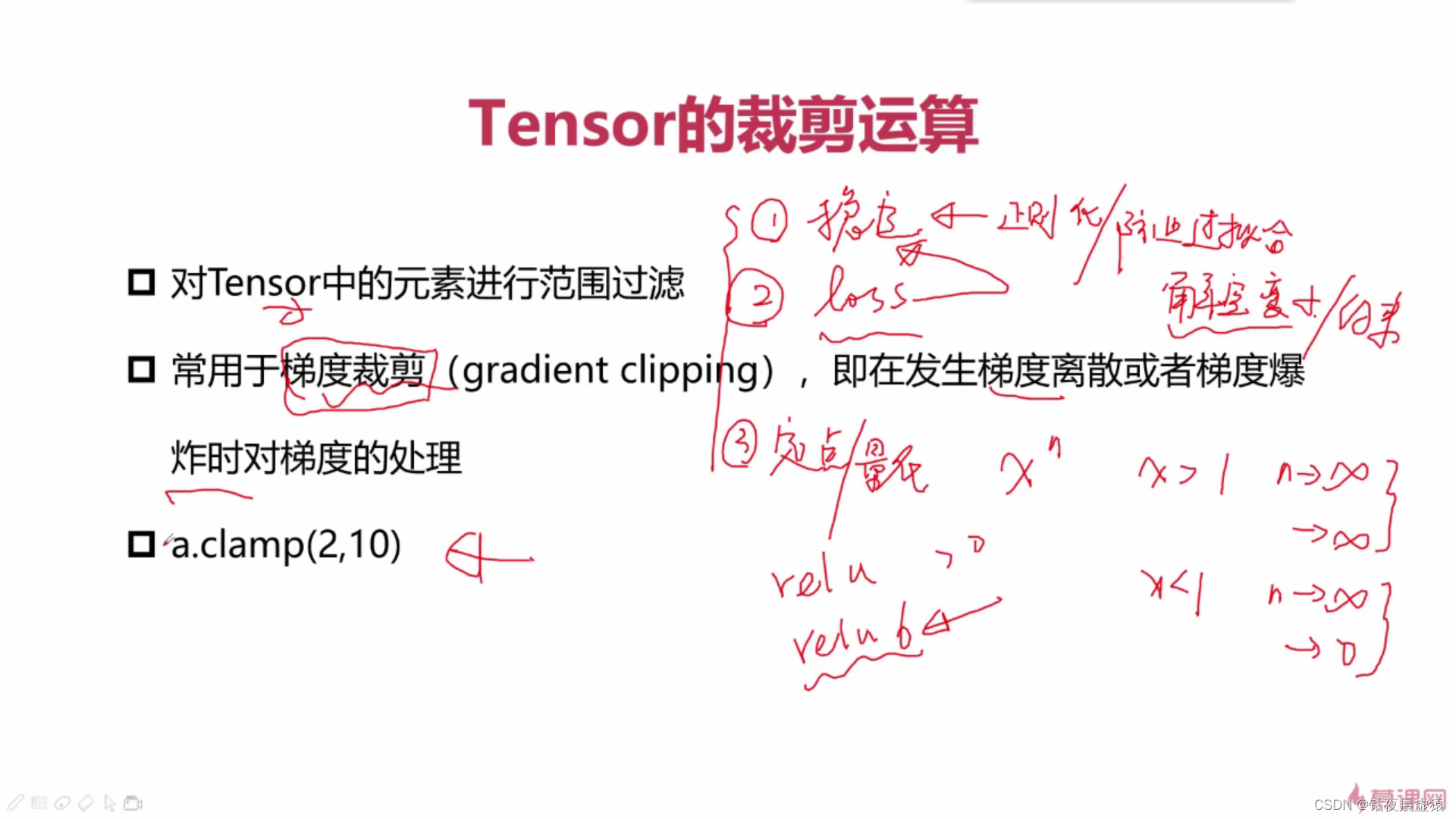

print(b.type())

'''Random'''

a = torch.rand(2, 2)

print(a)

print(a.type())

a = torch.normal(mean=0.0, std=torch.rand(5))

print(a)

print(a.type())

a = torch.normal(mean=torch.rand(5), std=torch.rand(5))

print(a)

print(a.type())

a = torch.Tensor(2, 2).uniform_(-1, 1)

print(a)

print(a.type())

'''Sequences'''

a = torch.arange(0, 11, 3)

print(a)

a = torch.arange(2, 10, 3)

print(a)

a = torch.linspace(2, 10, 3)  # n evenly spaced values, both endpoints included

print(a)

a = torch.linspace(2, 10, 4)

print(a)

a = torch.randperm(10)

print(a)

import numpy as np

a = np.array([[1, 2], [2, 3]])

print(a)

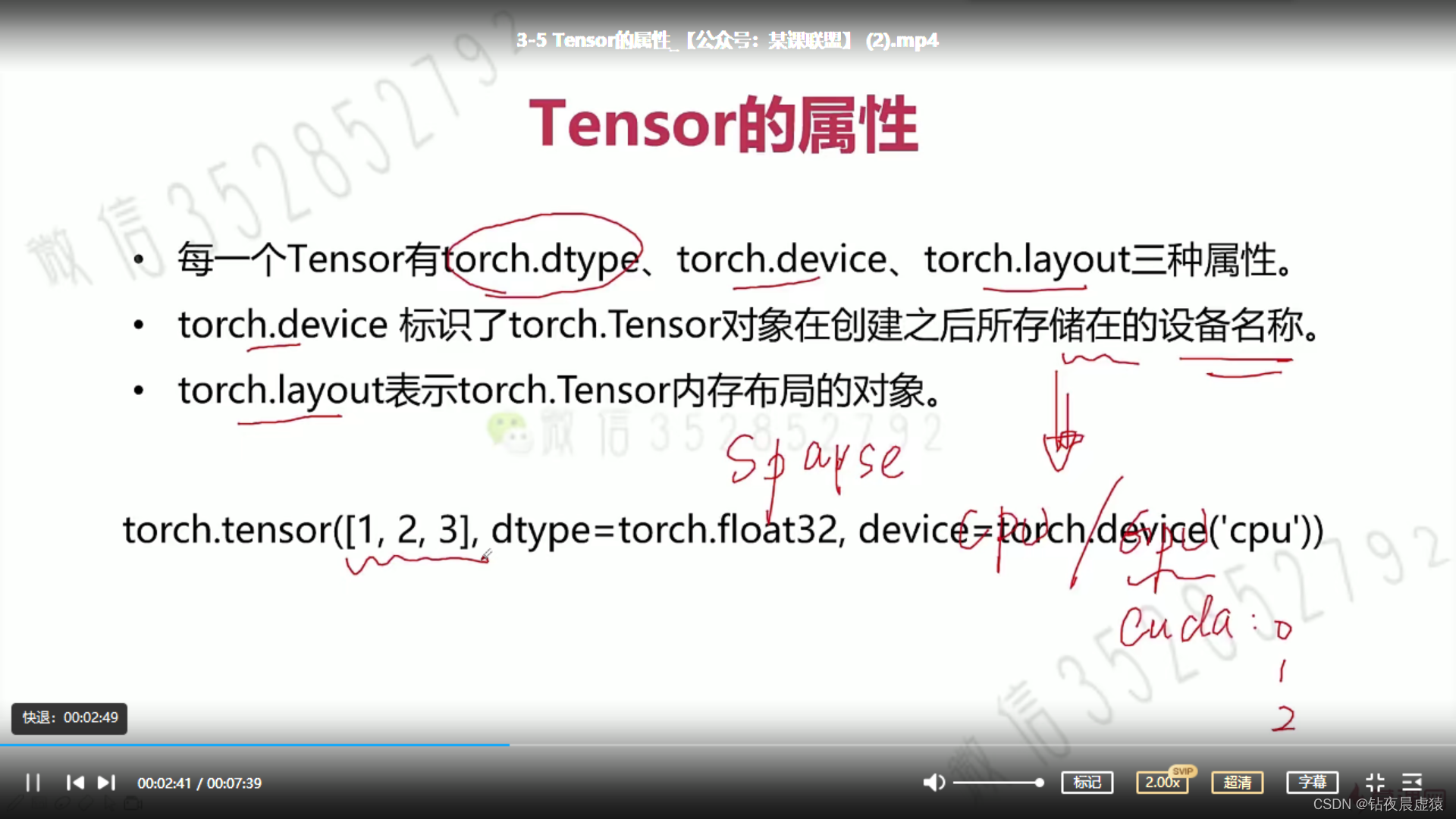
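
The NumPy array above is created and printed but never turned into a tensor. A minimal sketch of the two common conversions, assuming that is the step the example was heading toward (the names `shared`, `copied`, and `back` are illustrative):

```python
import numpy as np
import torch

arr = np.array([[1, 2], [2, 3]])

shared = torch.from_numpy(arr)  # shares memory with arr
copied = torch.tensor(arr)      # independent copy of the data

arr[0, 0] = 100                 # modify the NumPy array afterwards
print(shared[0, 0].item())      # reflects the change
print(copied[0, 0].item())      # keeps the original value

back = shared.numpy()           # tensor -> NumPy array, again sharing memory
print(type(back))
```

`torch.from_numpy` avoids a copy, so it suits large arrays, but any later write to the array also changes the tensor.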

5. Tensor Attributes

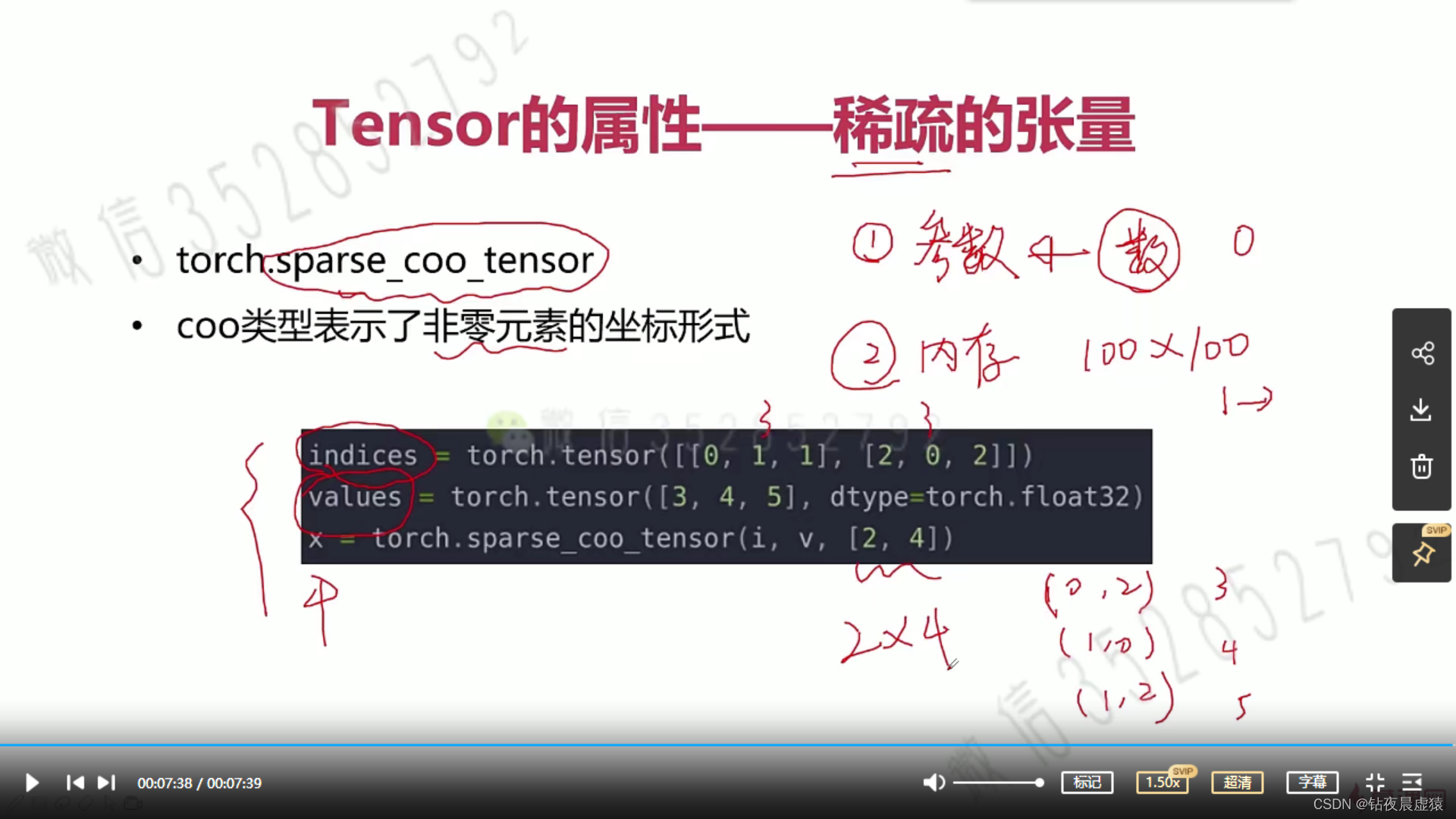
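
The attributes are listed only in the screenshot. As a sketch, assuming it covers the usual `dtype`, `device`, and `layout` attributes, they can be inspected like this:

```python
import torch

t = torch.zeros(2, 3, dtype=torch.float64, device=torch.device("cpu"))

print(t.dtype)          # torch.float64
print(t.device)         # cpu
print(t.layout)         # torch.strided (the dense layout)
print(t.shape)          # size along each dimension
print(t.requires_grad)  # whether autograd tracks this tensor
```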

6. Tensor Attributes: Sparse Tensors in Practice

import torch

dev = torch.device('cpu')

# dev = torch.device('cuda')

a = torch.tensor([2, 2], dtype=torch.float32, device=dev)

print(a)

# Place non-zero values on the diagonal, at positions (0, 0), (1, 1), (2, 2)

i = torch.tensor([[0, 1, 2], [0, 1, 2]])

v = torch.tensor([1, 2, 3])

a = torch.sparse_coo_tensor(i, v, (4, 4), dtype=torch.float32, device=dev).to_dense()

print(a)

7. Tensor Arithmetic Operations

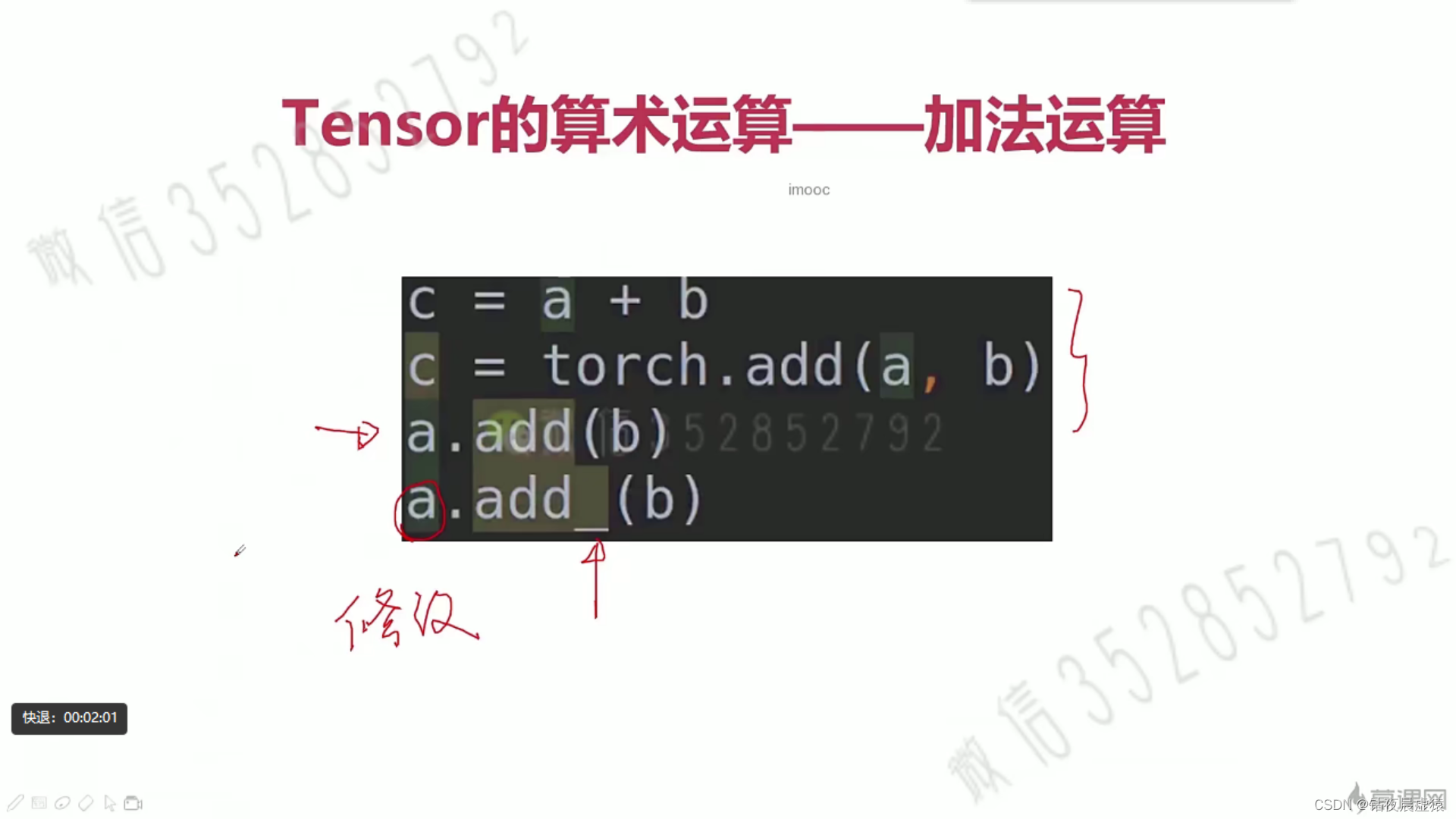

8. Tensor Arithmetic: Programming Examples

import torch

# add

a = torch.rand(2, 3)

b = torch.rand(2, 3)

print('a = ', a)

print('b = ', b)

print('====== add result ======')

print(a + b)

print(a.add(b))

print(torch.add(a, b))

print(a)

print(a.add_(b))

print(a)

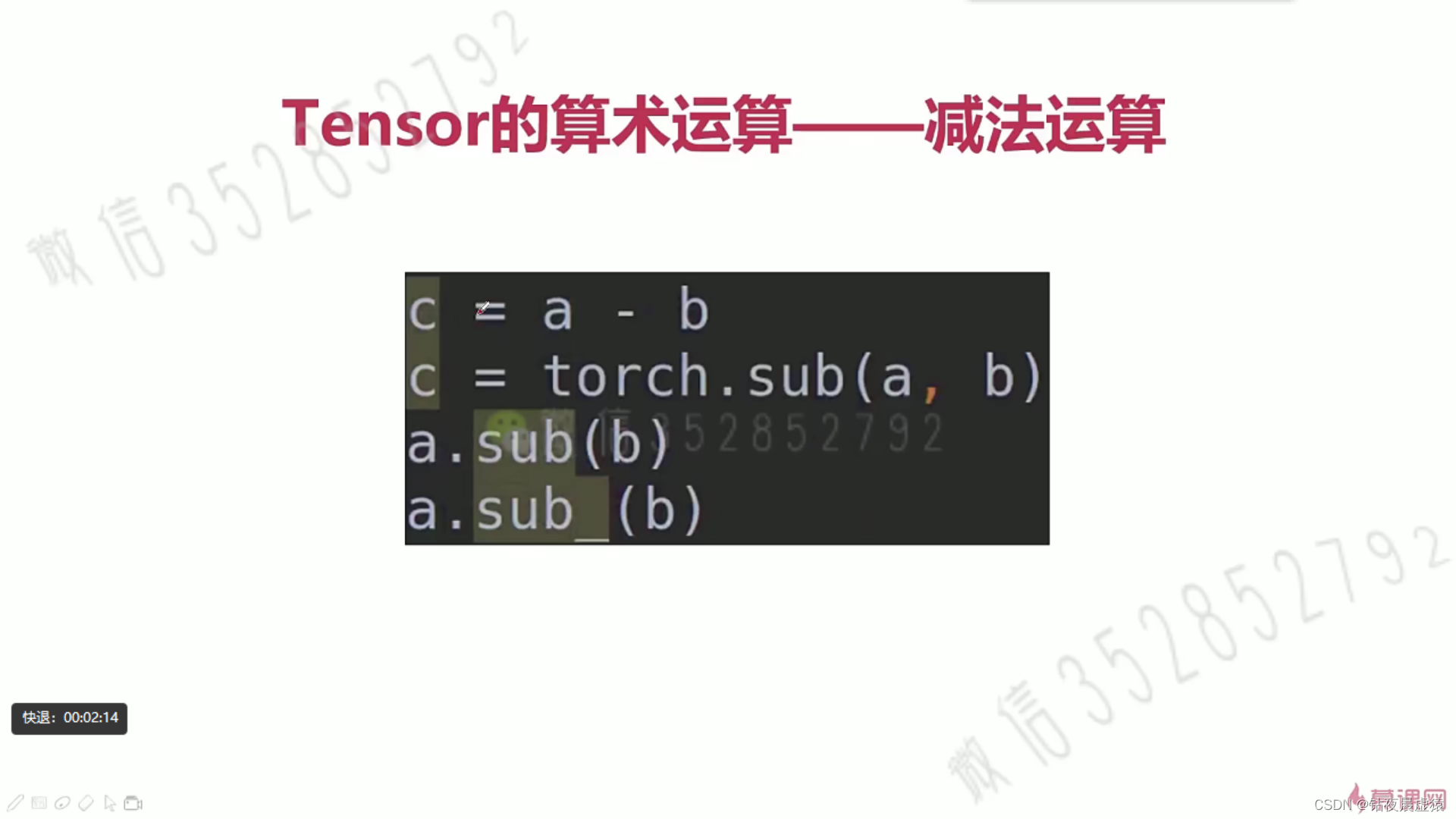

# sub

print('====== sub result ======')

print(a - b)

print(a)

print(a.sub_(b))

print(a)

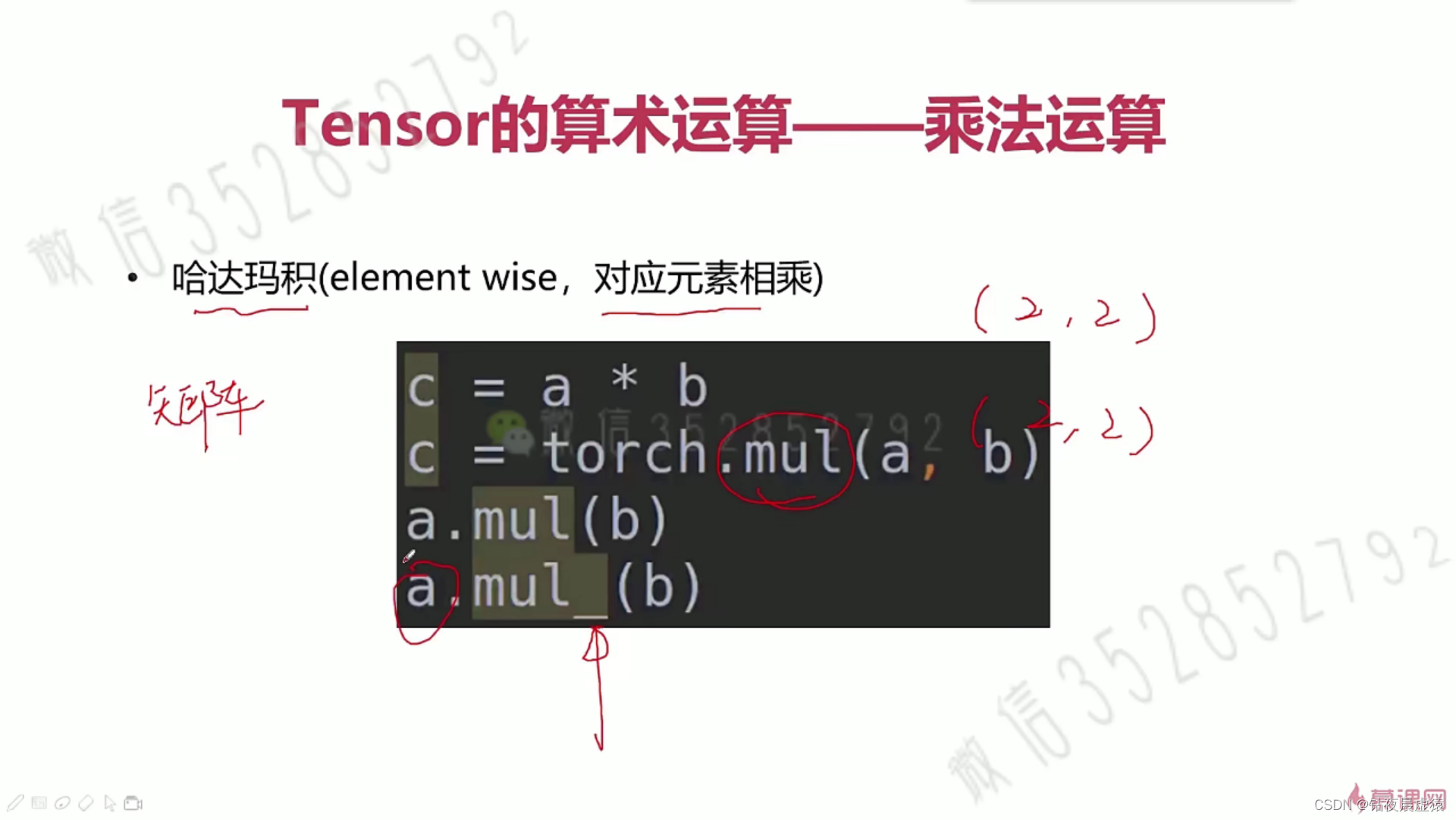

# mul

print('====== mul result ======')

print(a * b)

print(torch.mul(a, b))

print(a.mul(b))

print(a)

print(a.mul_(b))

print(a)

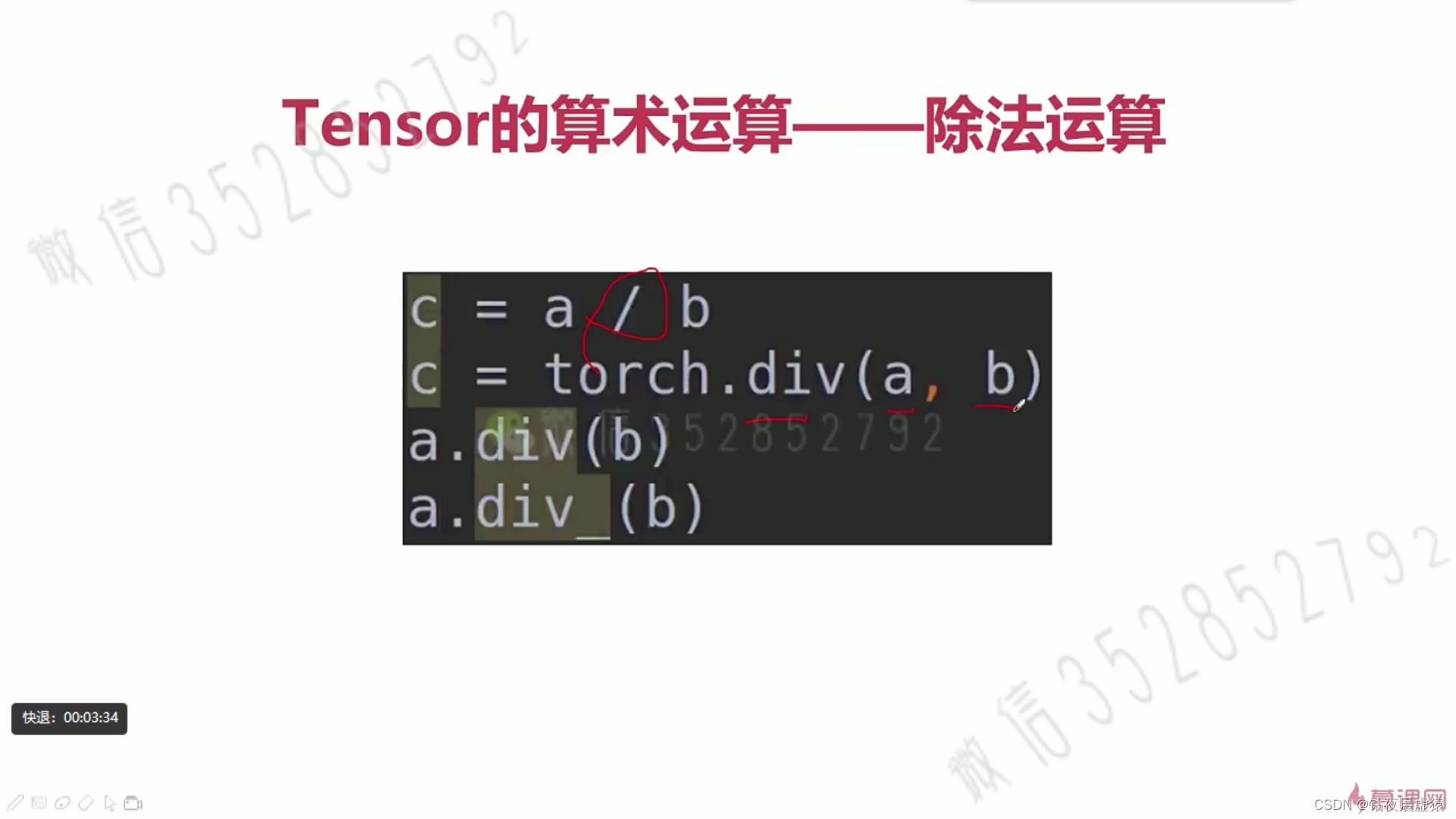

# div

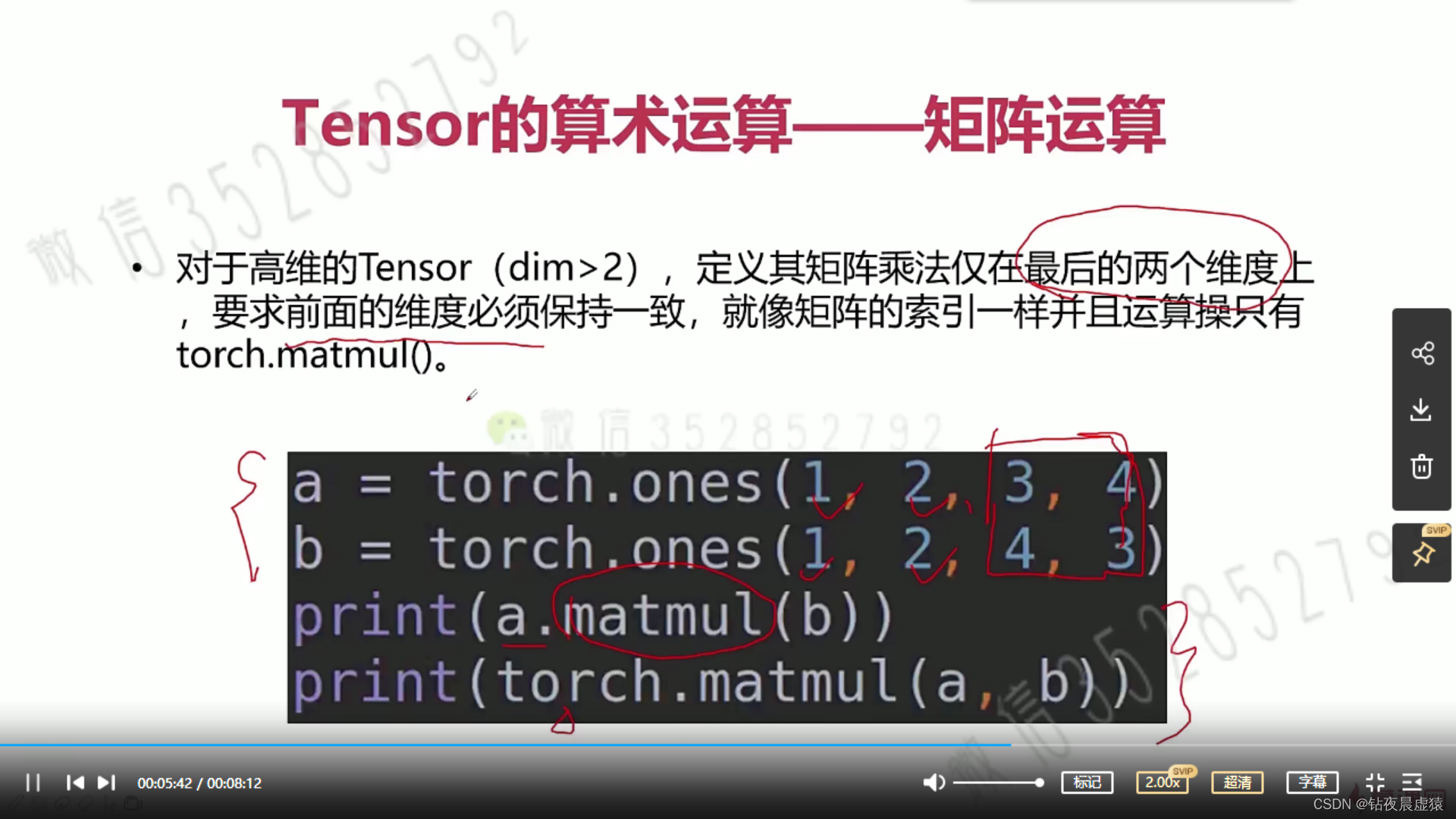
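
The div step has no code in the text. A sketch that follows the same pattern as add, sub, and mul above, with fixed inputs chosen here for illustration:

```python
import torch

a = torch.tensor([[6.0, 8.0], [9.0, 12.0]])
b = torch.tensor([[2.0, 4.0], [3.0, 5.0]])

print('====== div result ======')
quotient = a / b          # operator form, returns a new tensor
print(quotient)
print(torch.div(a, b))    # function form
print(a.div(b))           # method form
print(a)                  # a is unchanged so far
print(a.div_(b))          # in-place form: the result is written into a
print(a)                  # a now holds the quotient
```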

# matmul: matrix multiplication

print('====== matmul result ======')

a = torch.ones(2, 1)

b = torch.ones(1, 2)

print(a @ b)

print(torch.matmul(a, b))

print(torch.mm(a, b))

# Higher-dimensional tensors: matmul acts on the last two dimensions

a = torch.ones(1, 2, 3, 4)

b = torch.ones(1, 2, 4, 3)

print(a.shape)

print(b.shape)

print(a @ b)

print((a @ b).shape)

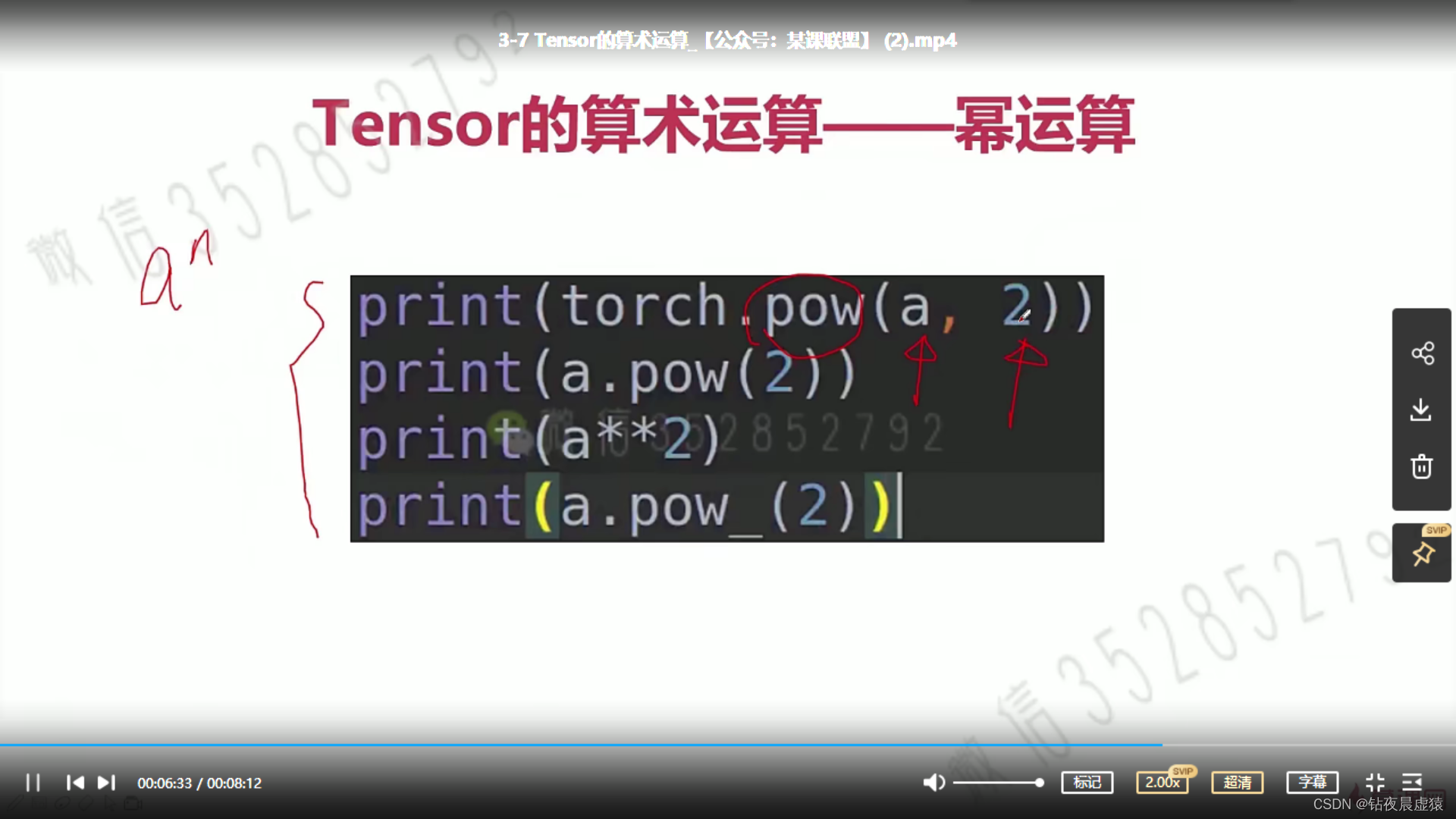

# pow

print('====== pow result ======')

a = torch.tensor([1, 2])

print(a.pow(3))

print(torch.pow(a, 3))

print(a**3)

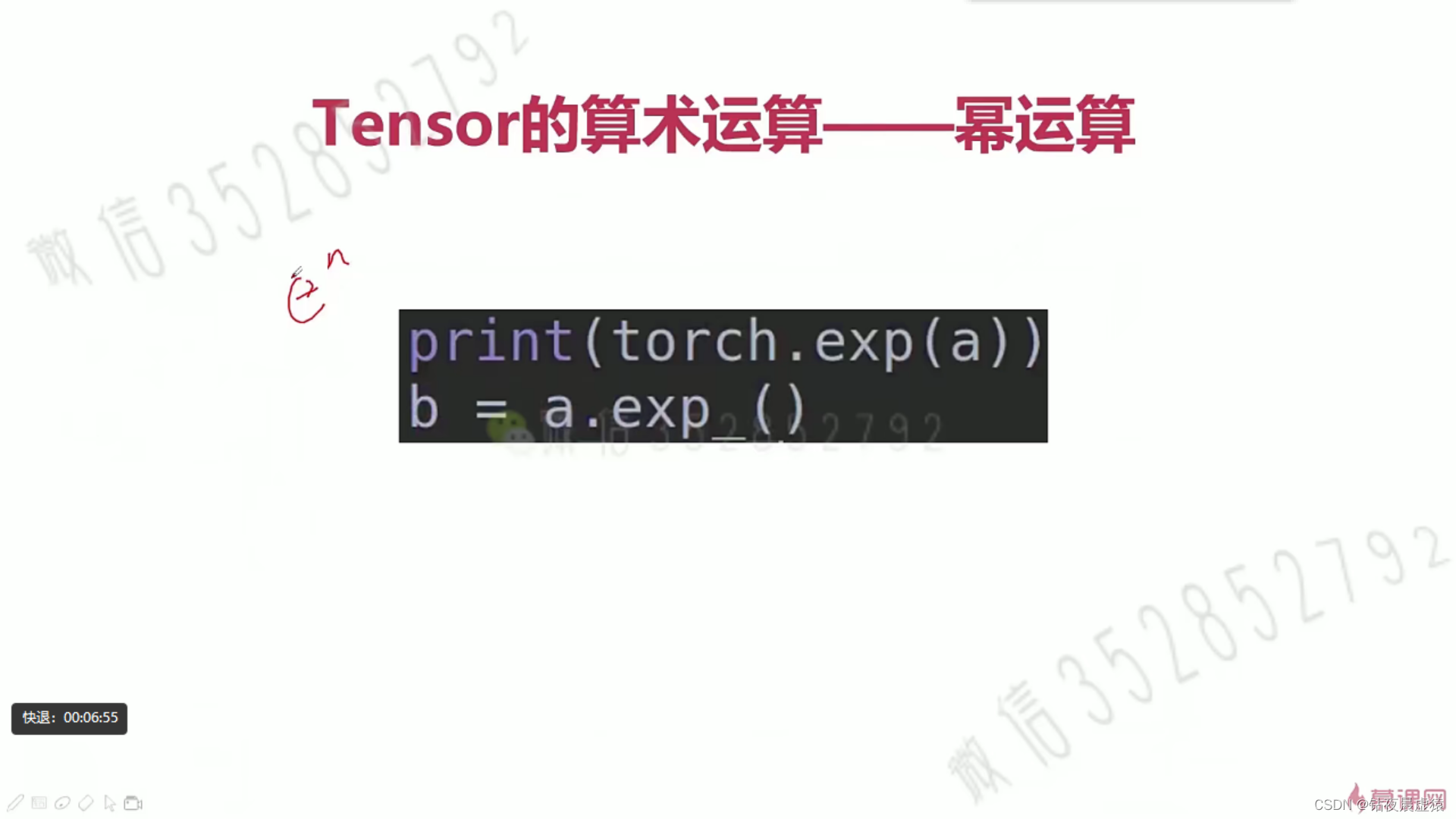

# exp

print('====== exp result ======')

print(torch.exp(a))

print(a.exp())

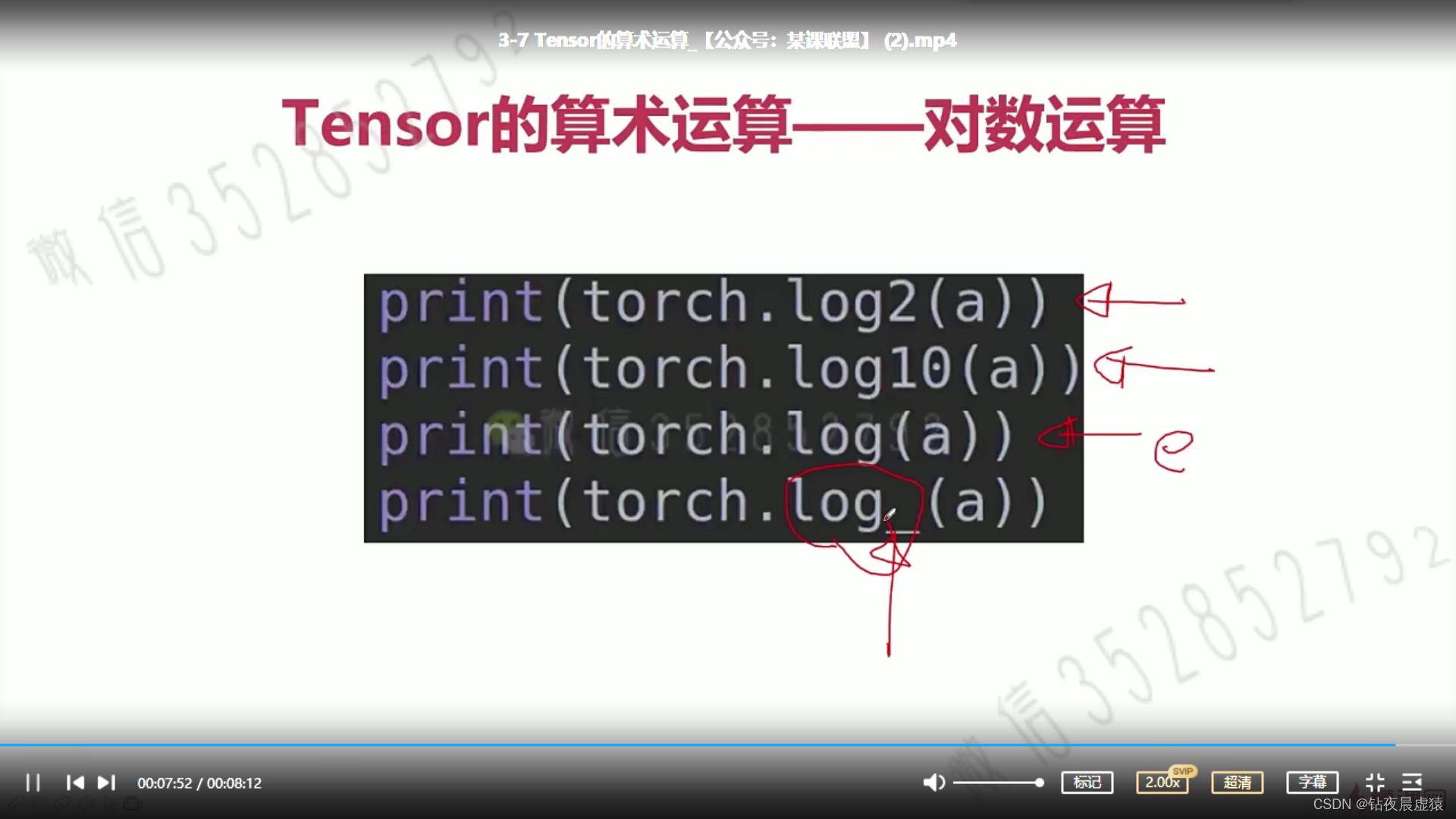

# log

print('====== log result ======')

print(torch.log(a))

print(a.log())

print(torch.tensor(0.6931).exp())

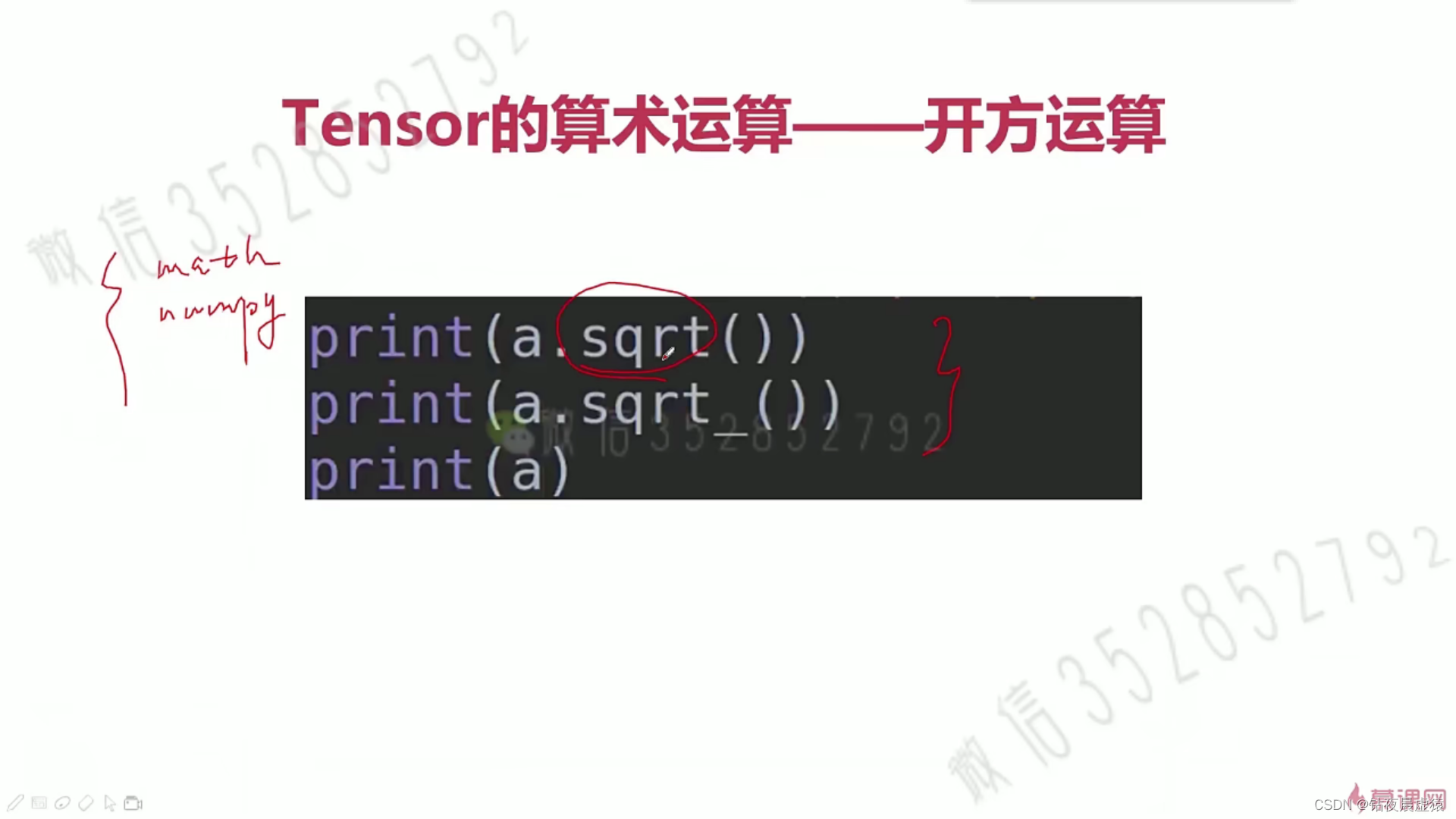

# sqrt

print('====== sqrt result ======')

print(torch.sqrt(a))

print(a.sqrt())

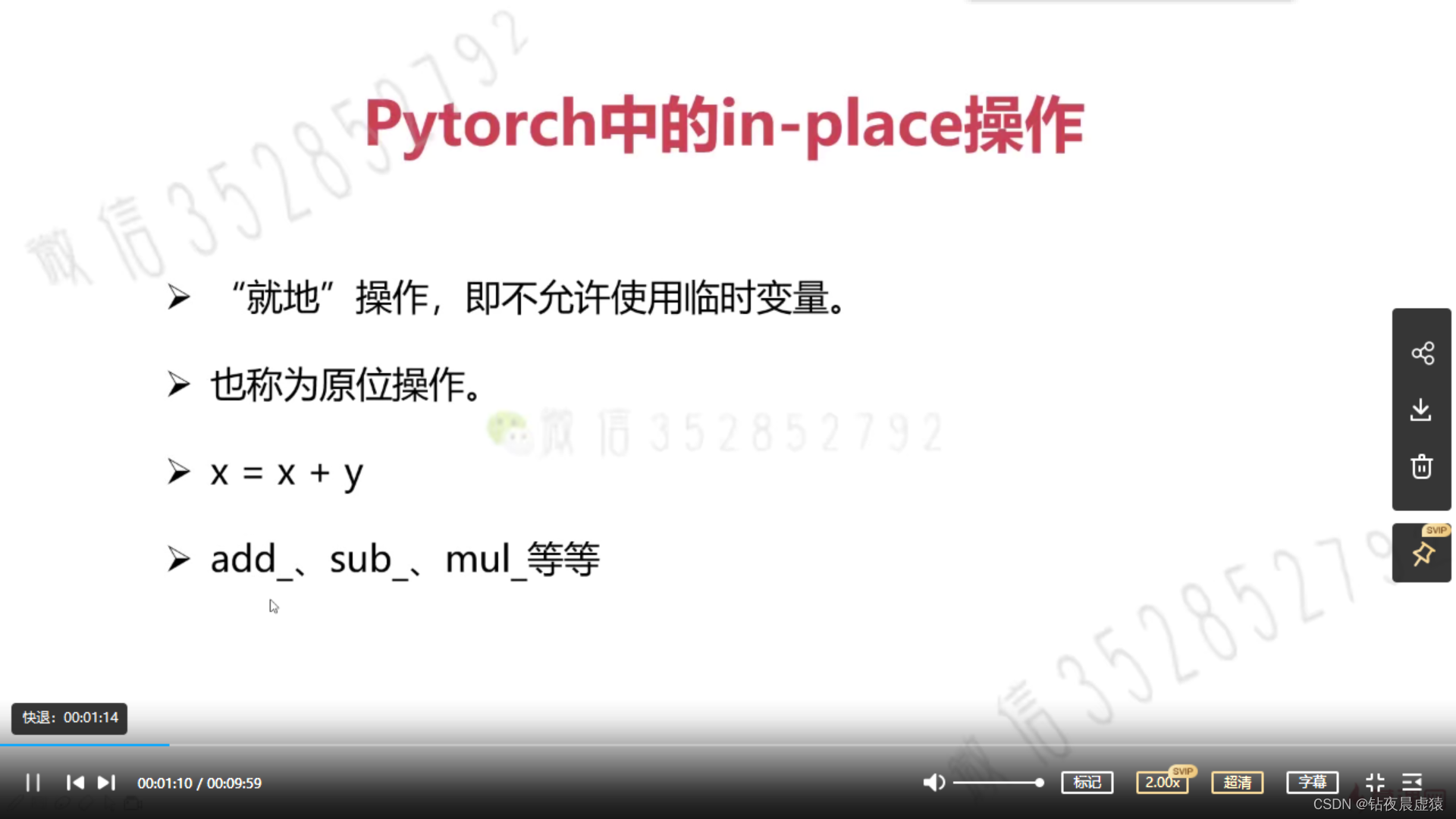

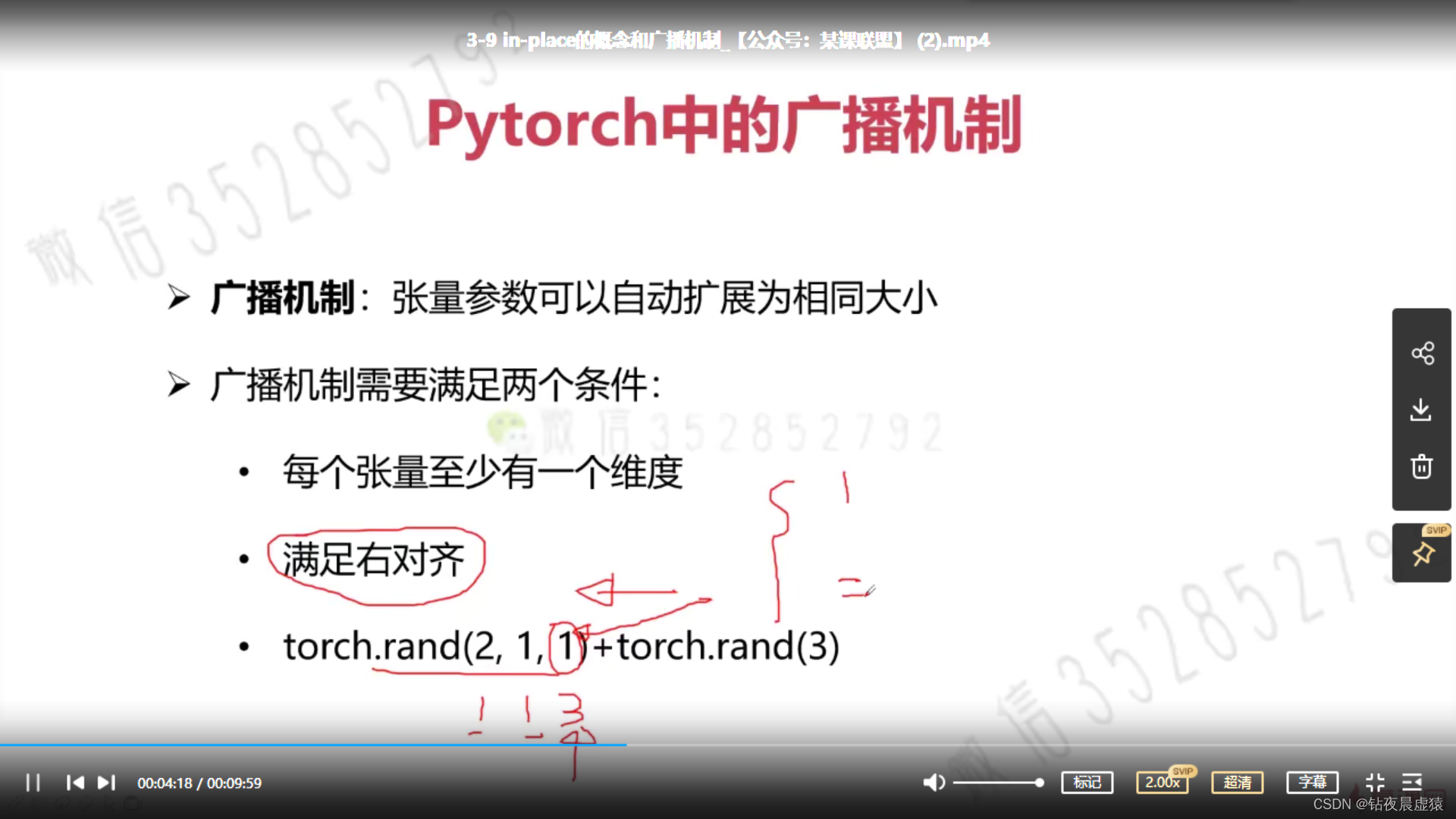

9. The In-Place Concept and the Broadcasting Mechanism

import torch

a = torch.rand(2, 3)

b = torch.rand(3)

# a 2 * 3

# b 3 (treated as 1 * 3)

# c 2 * 3

c = a + b

print(a)

print(b)

print(c)

print(c.shape)

a = torch.rand(2, 1)

b = torch.rand(1, 2)

# a 2 * 1

# b 1 * 2

# c 2 * 2

c = a + b

print(a)

print(b)

print(c)

print(c.shape)

a = torch.rand(2, 1, 1, 3)

b = torch.rand(4, 2, 3)

# a 2 * 1 * 1 * 3

# b 4 * 2 * 3

# c 2 * 4 * 2 * 3

c = a + b

print(a)

print(b)

print(c)

print(c.shape)

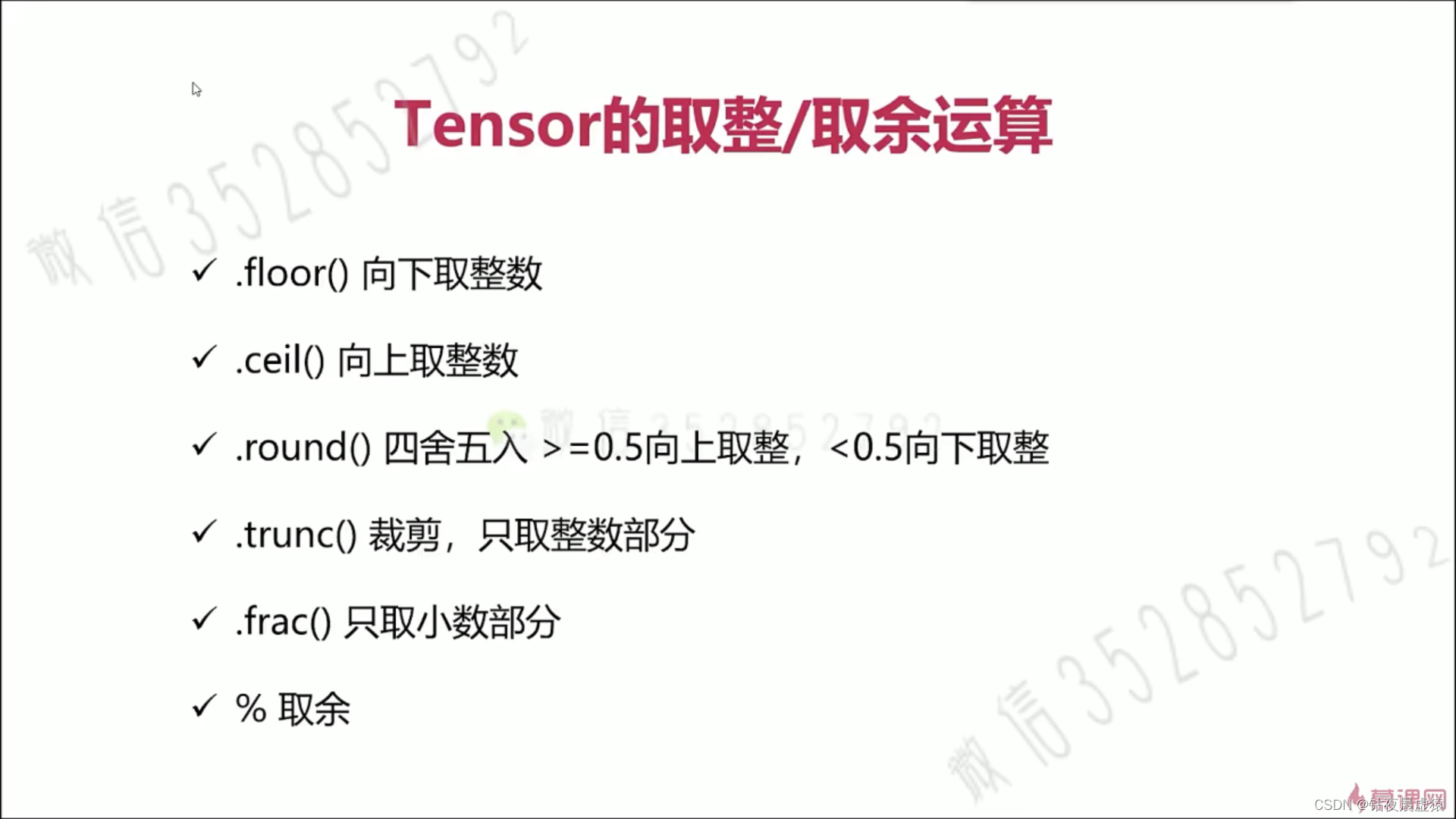
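
The code in this section covers broadcasting only. For the in-place half of the heading, a minimal sketch, assuming the usual convention that methods ending in an underscore write into the existing tensor (the flag names are illustrative):

```python
import torch

a = torch.ones(2, 3)
b = torch.ones(3)

out_of_place = a.add(b)       # new tensor; a is untouched
ptr_before = a.data_ptr()     # address of a's underlying storage
a.add_(b)                     # in-place: b is broadcast and added into a
same_storage = a.data_ptr() == ptr_before
print(same_storage)

# An in-place op cannot change the shape of its target, so broadcasting
# that would enlarge the left-hand tensor raises an error.
c = torch.rand(2, 1)
d = torch.rand(1, 2)
try:
    c.add_(d)                 # result would be 2 * 2, but c is 2 * 1
    broadcast_in_place_failed = False
except RuntimeError:
    broadcast_in_place_failed = True
print(broadcast_in_place_failed)
```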

10. Rounding and Remainder

import torch

a = torch.rand(2, 2)

a *= 10

print(a)

print(a.floor())

print(torch.ceil(a))

print(torch.round(a))

print(a.trunc())  # keep the integer part

print(torch.frac(a))  # keep the fractional part

print(a % 2)

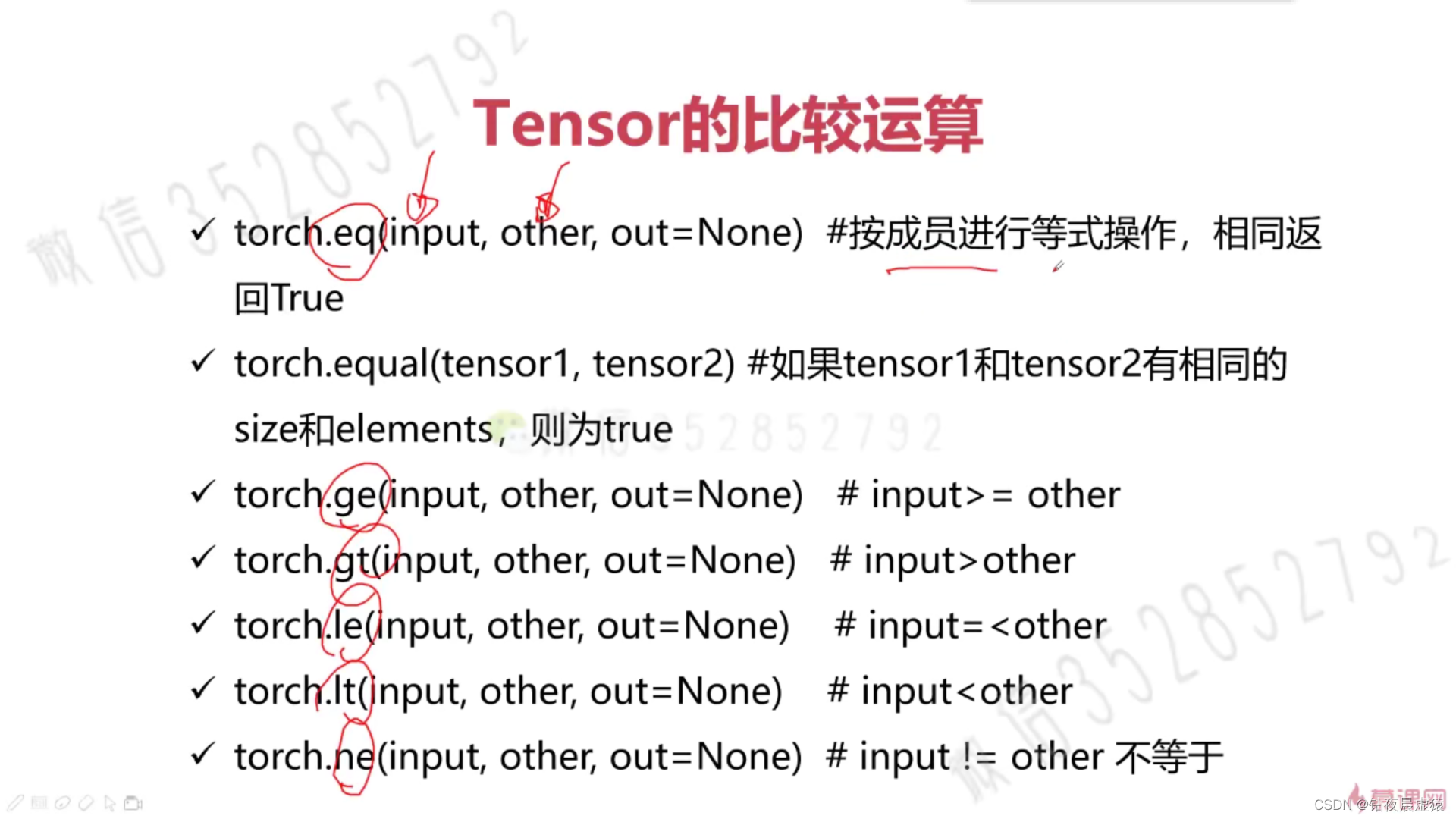

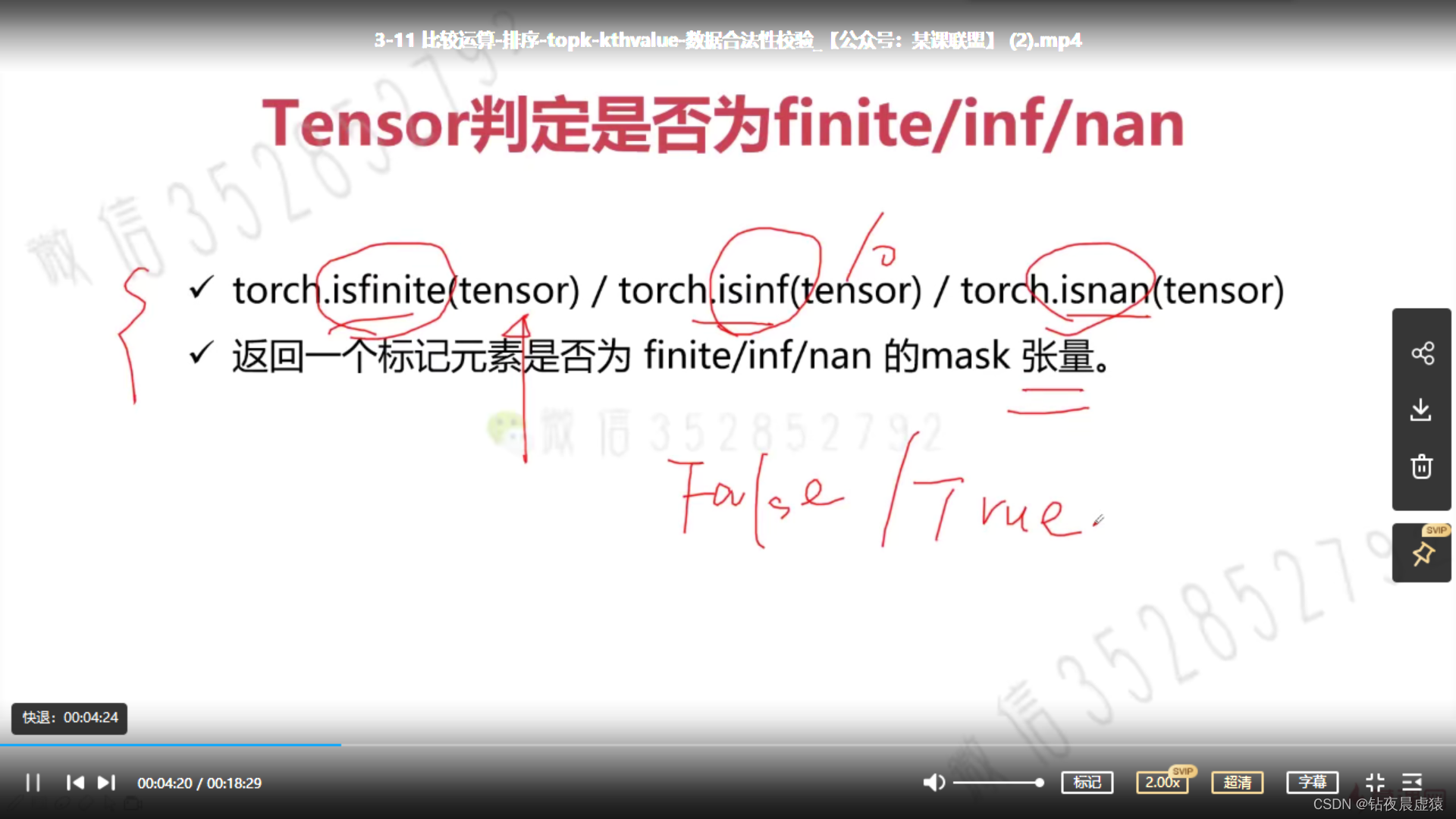

11. Comparison, Sorting, kthvalue, and Data Validity Checks

import torch

a = torch.rand(2, 3)

b = torch.rand(2, 3)

print(a)

print(b)

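'''Comparison'''

print(torch.eq(a, b))  # element-wise equality, returns a boolean tensor

print(torch.equal(a, b))  # a single bool: True only if shapes and all elements match

print(torch.ge(a, b))  # element-wise a >= b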

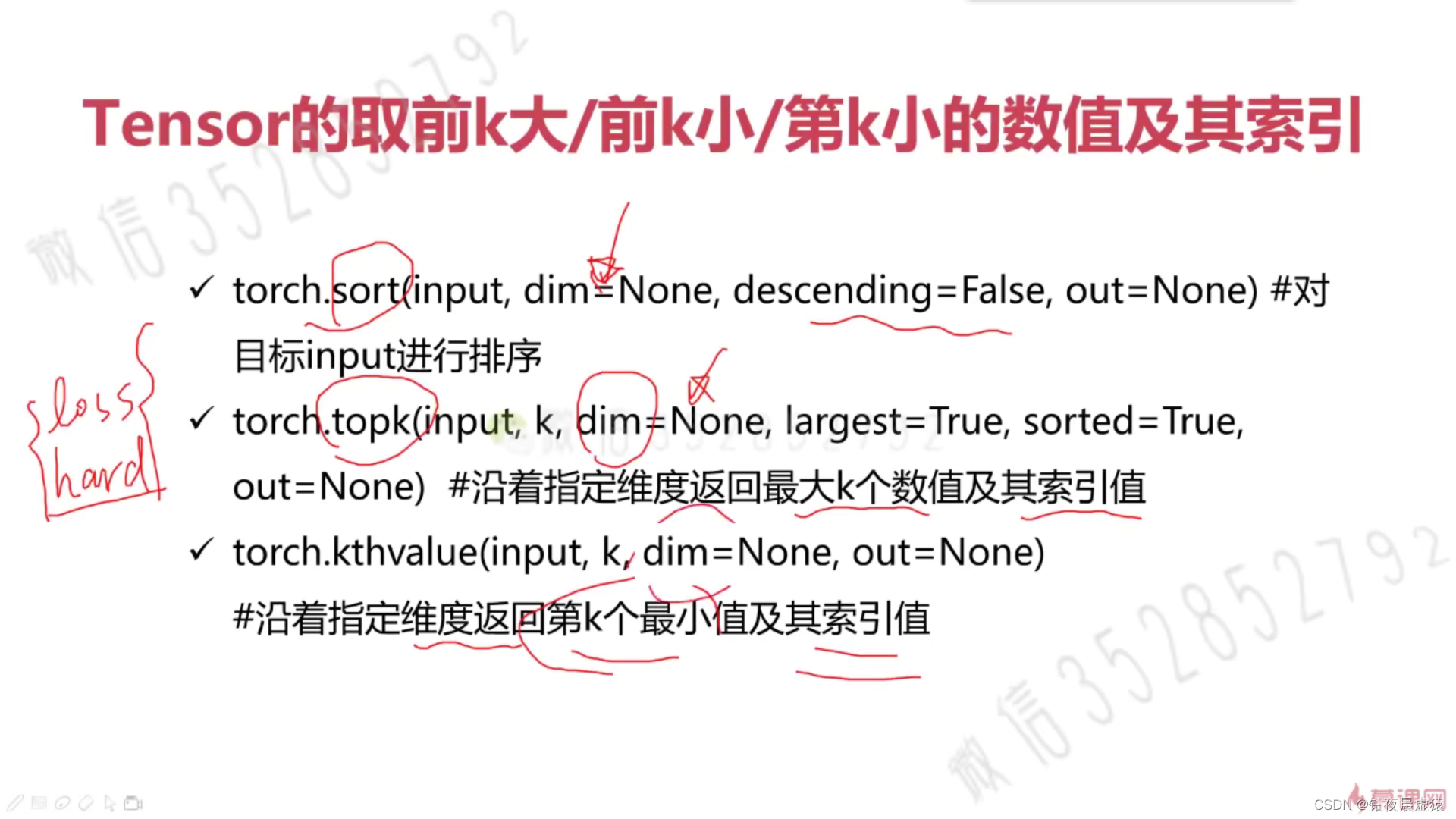

'''Sorting'''

a = torch.tensor([[1, 4, 4, 3, 5],

[2, 3, 1, 3, 5]])

print(a.shape)

print(torch.sort(a, dim = 1, descending=False))

'''topk'''

a = torch.tensor([[2, 4, 3, 1, 5],

[2, 3, 5, 1, 4]])

print(a.shape)

print(torch.topk(a, k = 1, dim = 0))

print(torch.topk(a, k = 2, dim = 0))

print(torch.topk(a, k = 1, dim = 1))

print(torch.topk(a, k = 2, dim = 1))

print(torch.kthvalue(a, k = 2, dim = 0))  # the 2nd smallest value along dim 0

print(torch.kthvalue(a, k = 2, dim = 1))

a = torch.rand(2, 3)

print(a)

print(torch.isfinite(a))

print(torch.isfinite(a/0))

print(torch.isinf(a/0))

print(torch.isnan(a))

import numpy as np

a = torch.tensor([1, 2, np.nan])

print(torch.isnan(a))

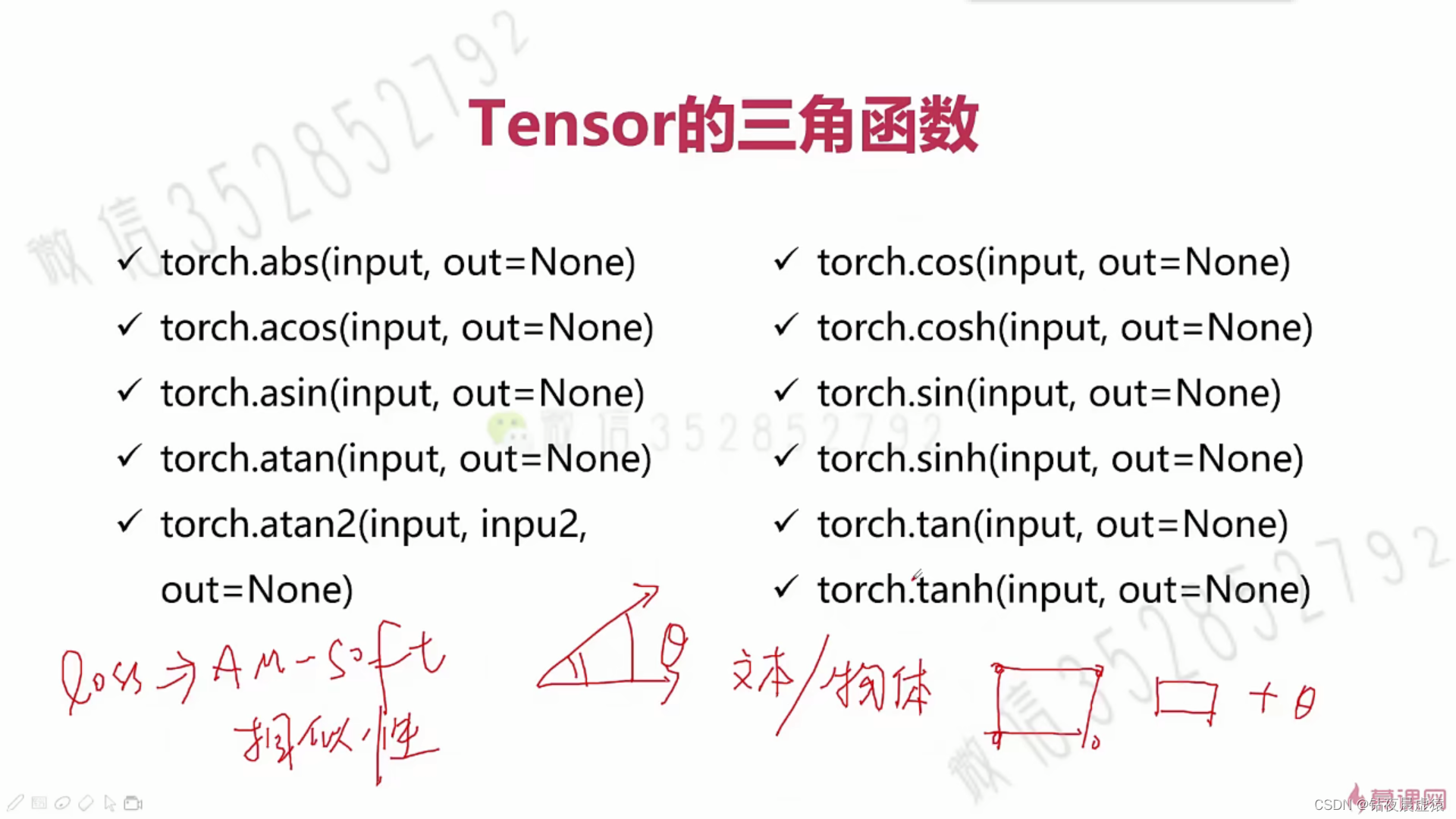

12. Trigonometric Functions

import torch

a = torch.zeros(2, 3)

b = torch.cos(a)

print(a)

print(b)

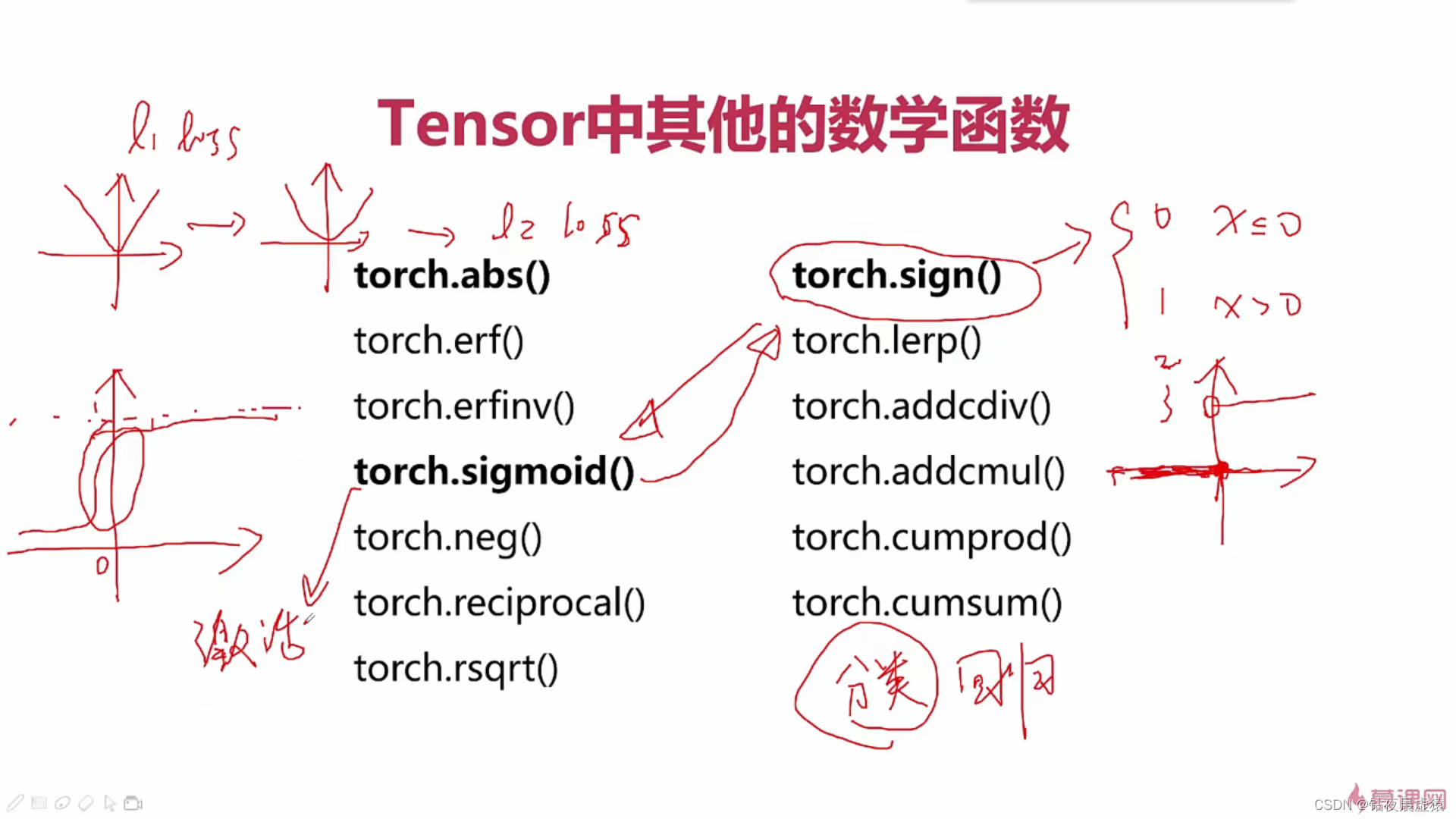

13. Other Math Functions

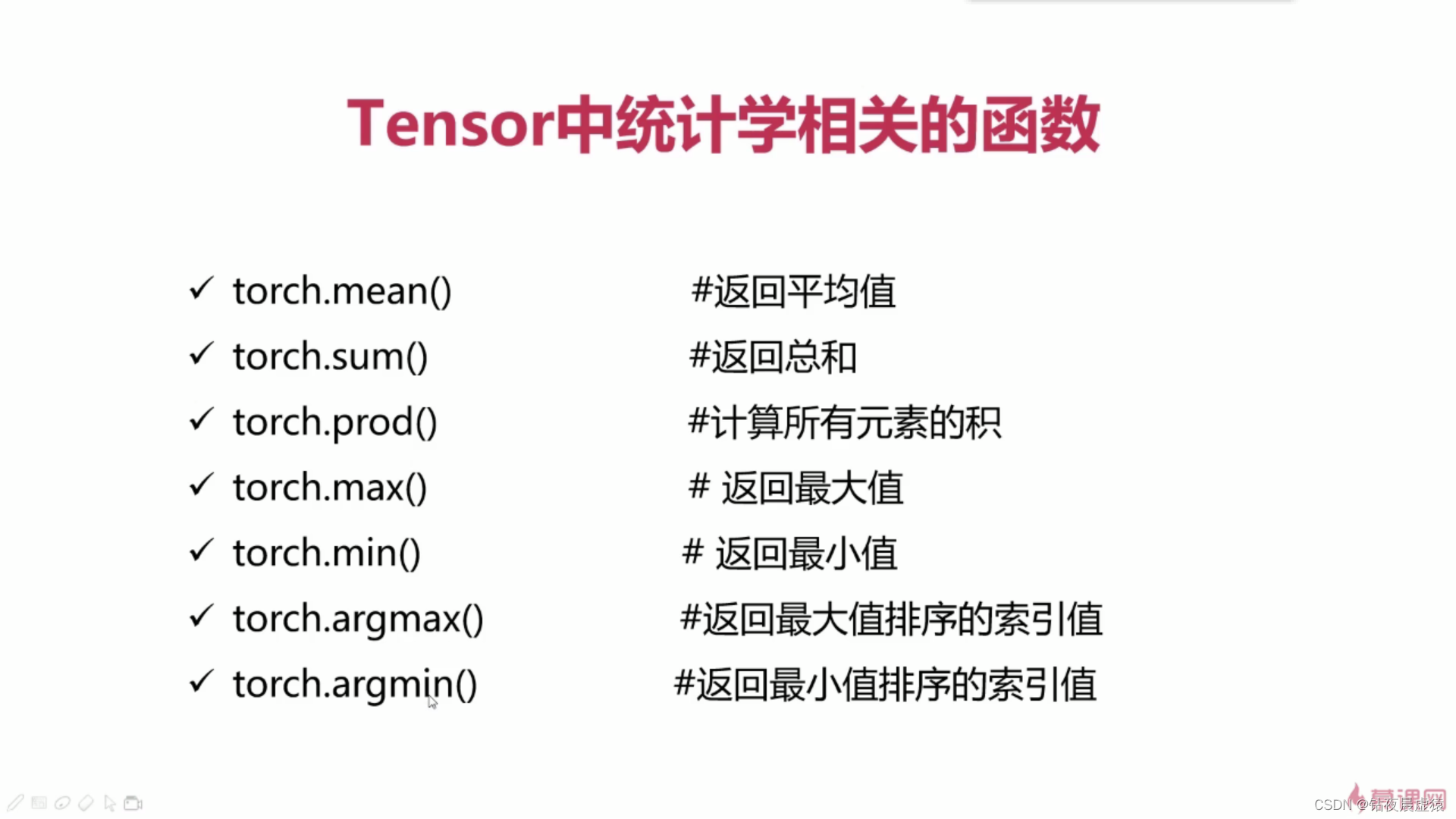

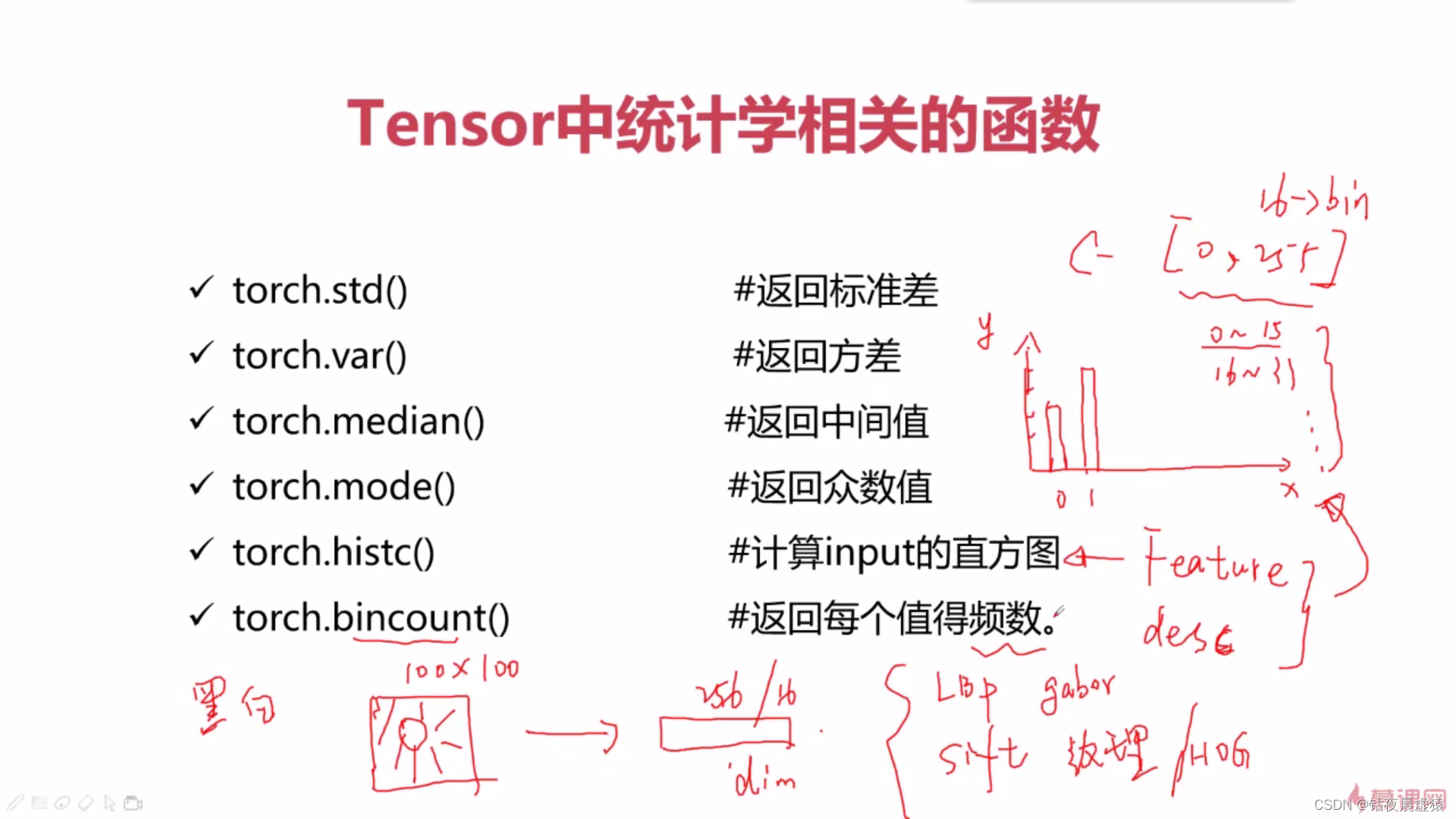
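
The functions for this section appear only in the screenshots, so the selection below is an assumption: a few common element-wise functions, applied to an input chosen here for illustration.

```python
import torch

x = torch.tensor([-2.0, -0.5, 0.0, 0.5, 2.0])

abs_x = torch.abs(x)          # absolute value
sign_x = torch.sign(x)        # -1, 0, or 1 per element
sigmoid_x = torch.sigmoid(x)  # 1 / (1 + exp(-x))
neg_x = torch.neg(x)          # element-wise negation

print(abs_x)
print(sign_x)
print(sigmoid_x)
print(neg_x)
print(torch.reciprocal(torch.tensor([1.0, 2.0, 4.0])))  # 1 / x
```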

14. PyTorch and Statistical Methods

import torch

a = torch.rand(2, 3)

print(a)

print(torch.mean(a, dim=0))

print(torch.sum(a, dim=0))

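print(torch.prod(a, dim=0))  # product of the elements along dim 0

print(torch.argmax(a, dim=0))

print(torch.argmin(a, dim=0))

print(torch.std(a))  # standard deviation

print(torch.var(a))  # variance

print(torch.median(a))  # median (the lower of the two middle values when the count is even)

print(torch.mode(a))  # mode (most frequent value) along the last dimension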

a = torch.rand(2, 2) * 10

print(a)

print(torch.histc(a, 6, 0, 0))  # histogram of a with 6 bins; min = max = 0 means the data's own min and max are used

a = torch.randint(0, 10, [10])

print(a)

print(torch.bincount(a))  # frequency of each value

# bincount can be used to count the number of samples in each class

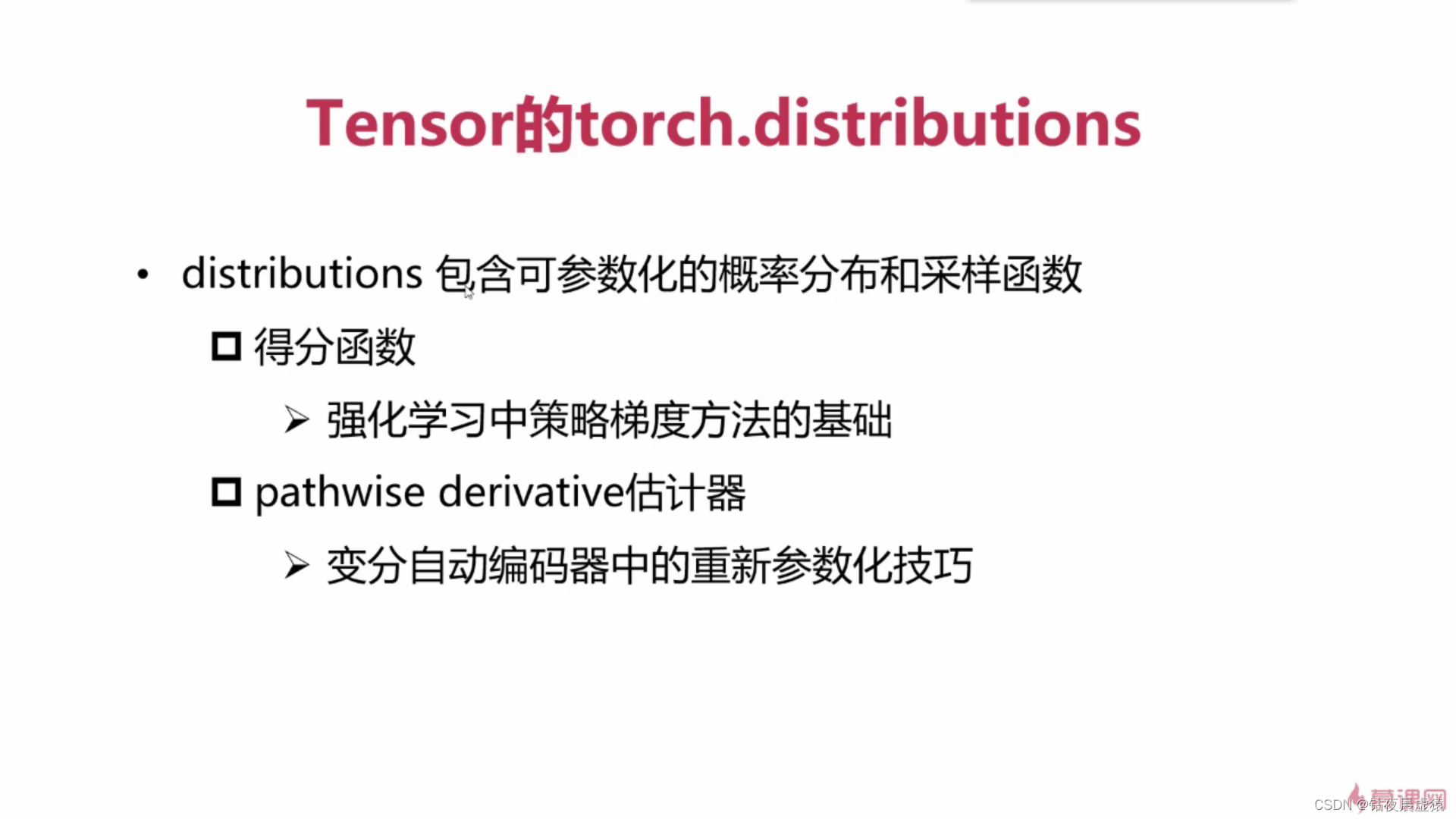

15. PyTorch and Distribution Functions
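
This heading has no content in the notes. As a sketch only, assuming the topic is the `torch.distributions` module, here is a standard normal distribution with a few of its methods:

```python
import torch
from torch.distributions import Normal

# Standard normal: mean 0, standard deviation 1
dist = Normal(loc=torch.tensor(0.0), scale=torch.tensor(1.0))

sample = dist.sample((5,))                 # draw 5 independent samples
log_p = dist.log_prob(torch.tensor(0.0))   # log density at 0, i.e. -0.5 * log(2 * pi)
cdf0 = dist.cdf(torch.tensor(0.0))         # P(X <= 0)

print(sample)
print(log_p)
print(cdf0)
```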

16. PyTorch and Random Sampling

import torch

torch.manual_seed(1)

mean = torch.rand(1, 2)

std = torch.rand(1, 2)

print(torch.normal(mean, std))

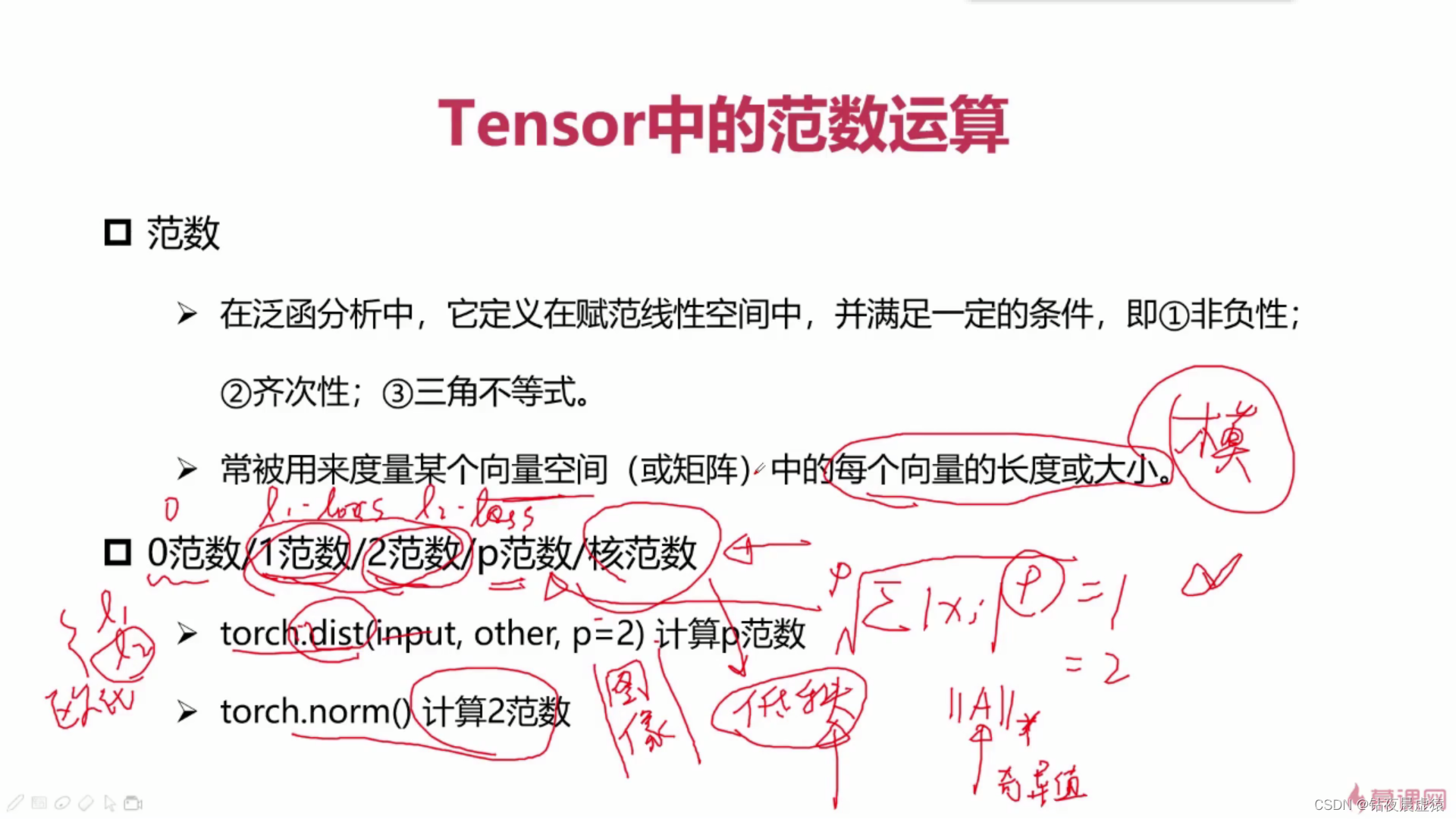
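
The purpose of `torch.manual_seed` in the snippet above is reproducibility. A short sketch that checks this, plus one more sampling function (`torch.multinomial`, added here as an illustration, not taken from the notes):

```python
import torch

torch.manual_seed(1)
first = torch.rand(3)

torch.manual_seed(1)           # reset the generator to the same state
second = torch.rand(3)

print(torch.equal(first, second))  # same seed, same random numbers

# Draw 5 category indices with the given (unnormalized) weights
probs = torch.tensor([0.1, 0.2, 0.7])
idx = torch.multinomial(probs, num_samples=5, replacement=True)
print(idx)
```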

17. PyTorch and Linear Algebra Operations

import torch

a = torch.rand(2, 3)

b = torch.rand(2, 3)

print(a)

print(b)

print(torch.dist(a, b, p=1))

print(torch.dist(a, b, p=2))

print(torch.dist(a, b, p=3))

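print(torch.norm(a))  # 2-norm of a (the Frobenius norm for a matrix)

print(torch.norm(a, p=1))  # 1-norm of a (sum of absolute values)

print(torch.norm(a, p='fro'))  # Frobenius norm of a (the nuclear norm would be p='nuc')

print(torch.norm(a, p=0))  # number of non-zero elements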

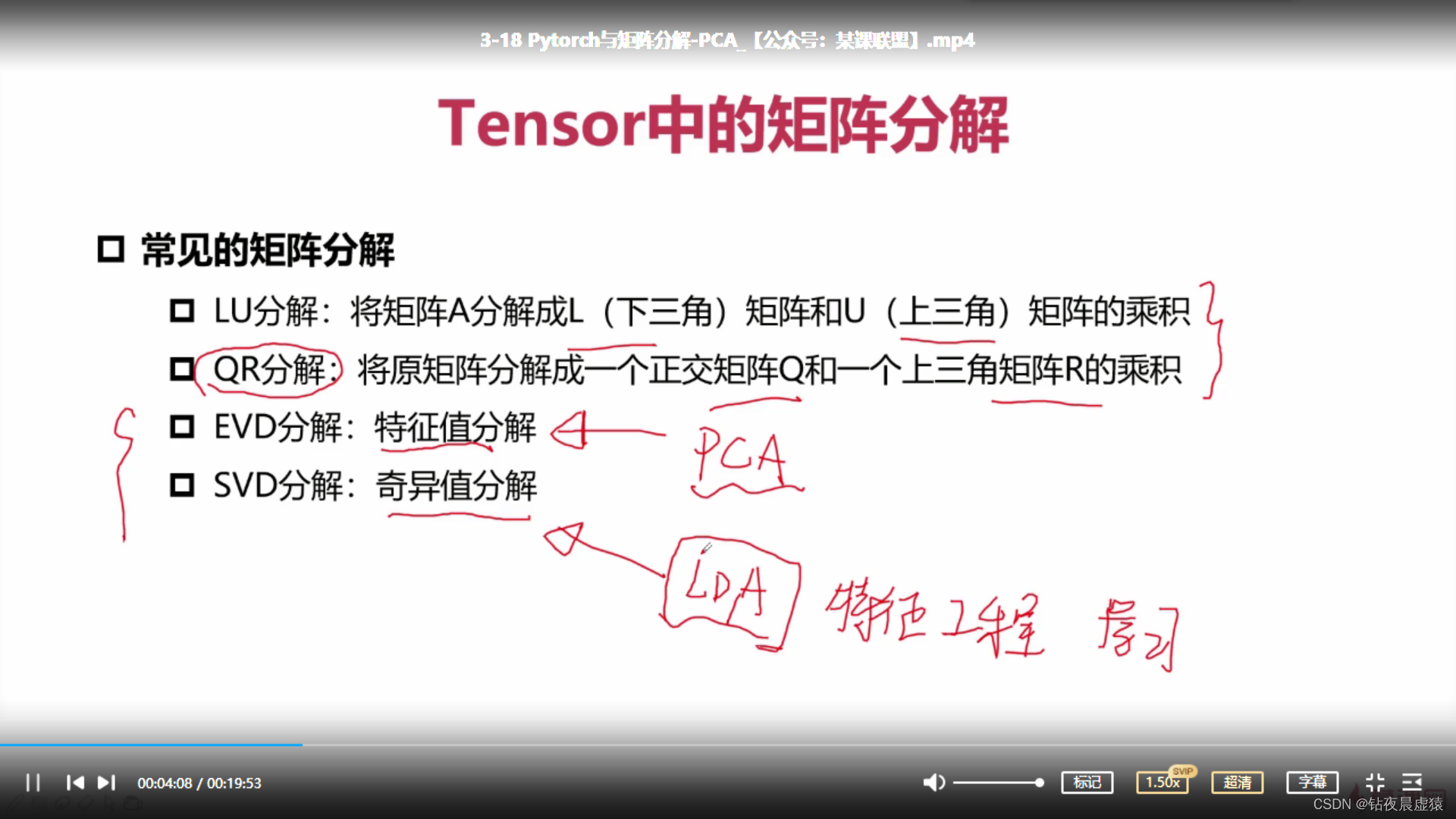

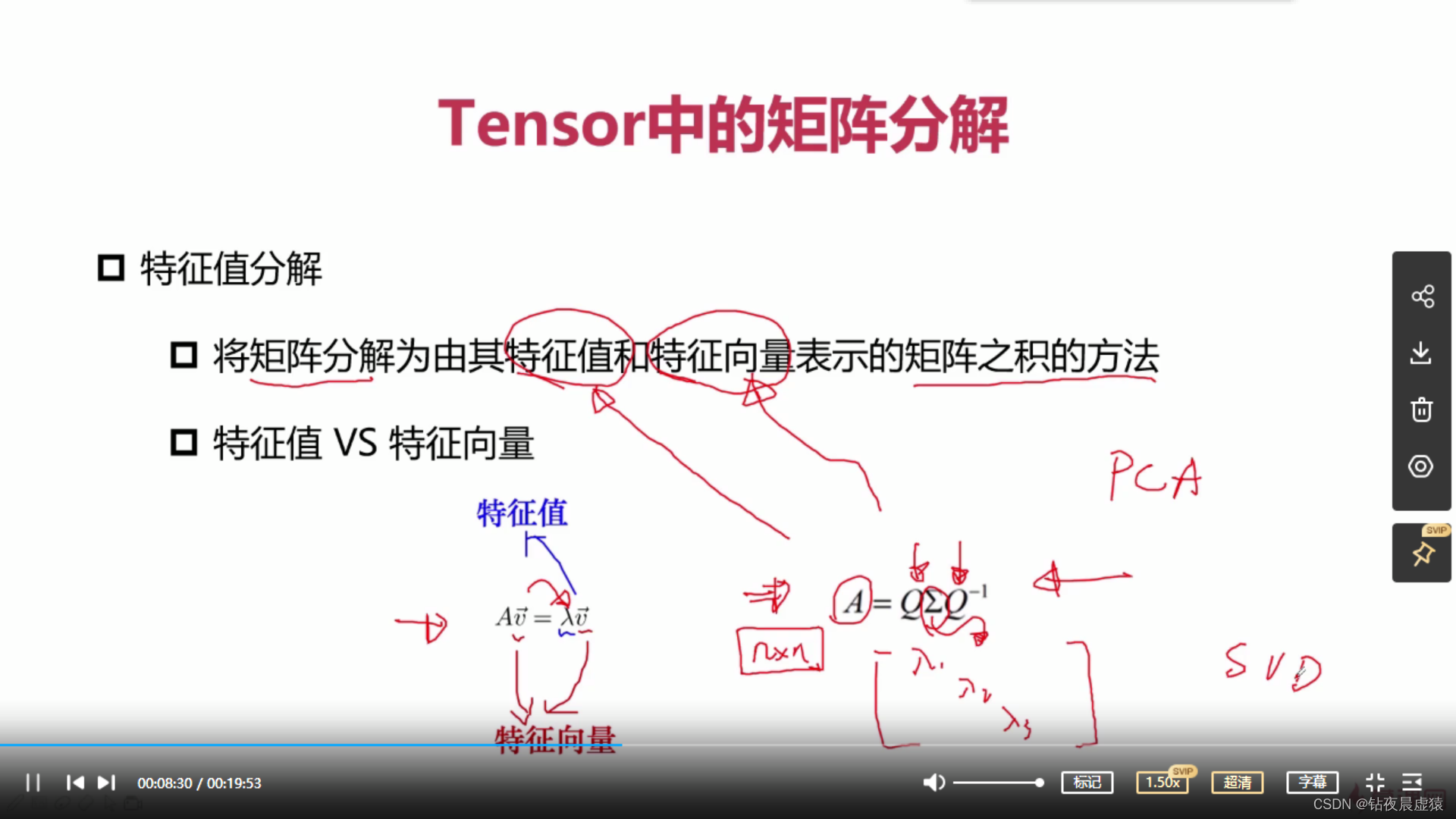

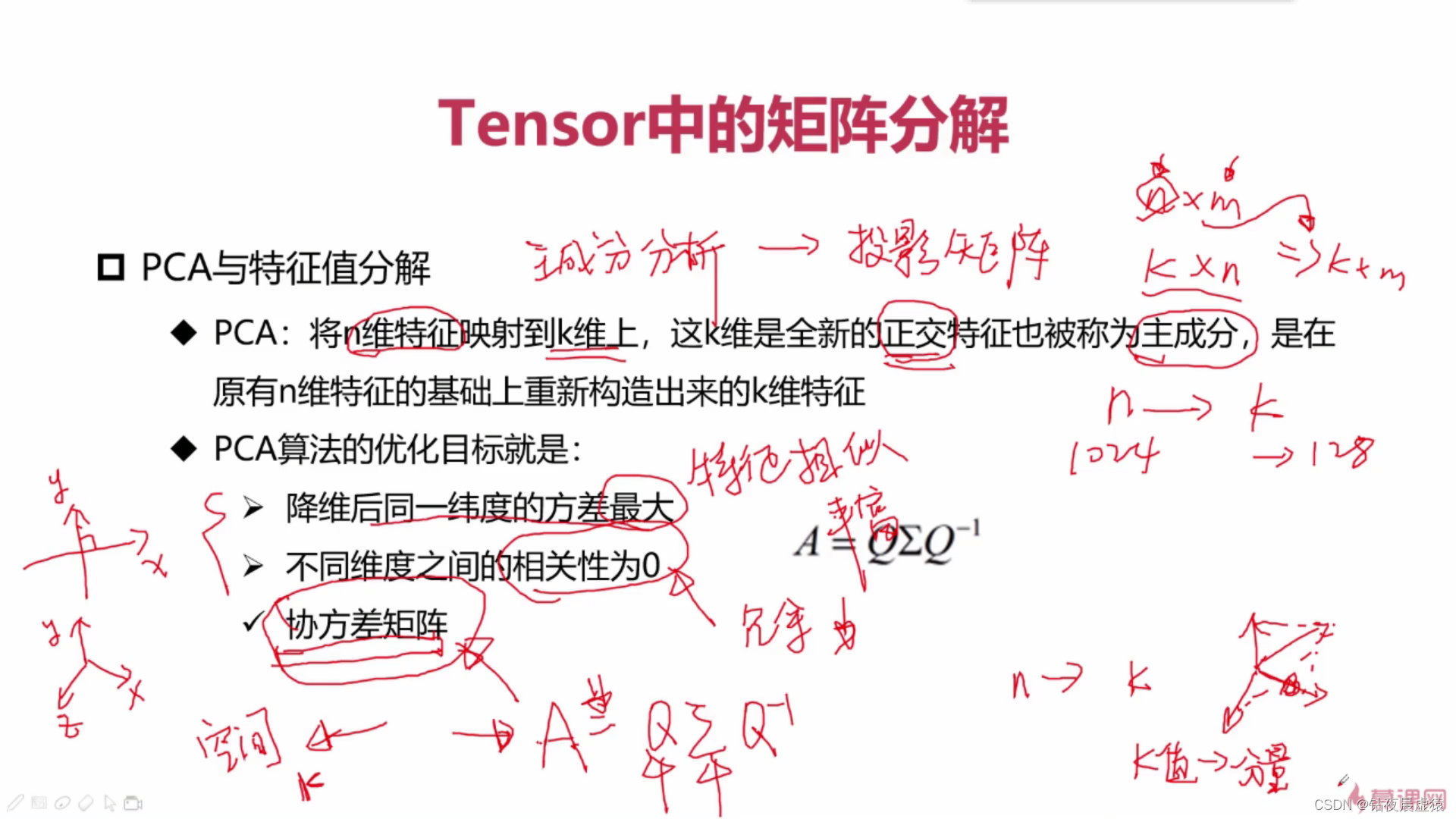

18. PyTorch and Matrix Decomposition: PCA

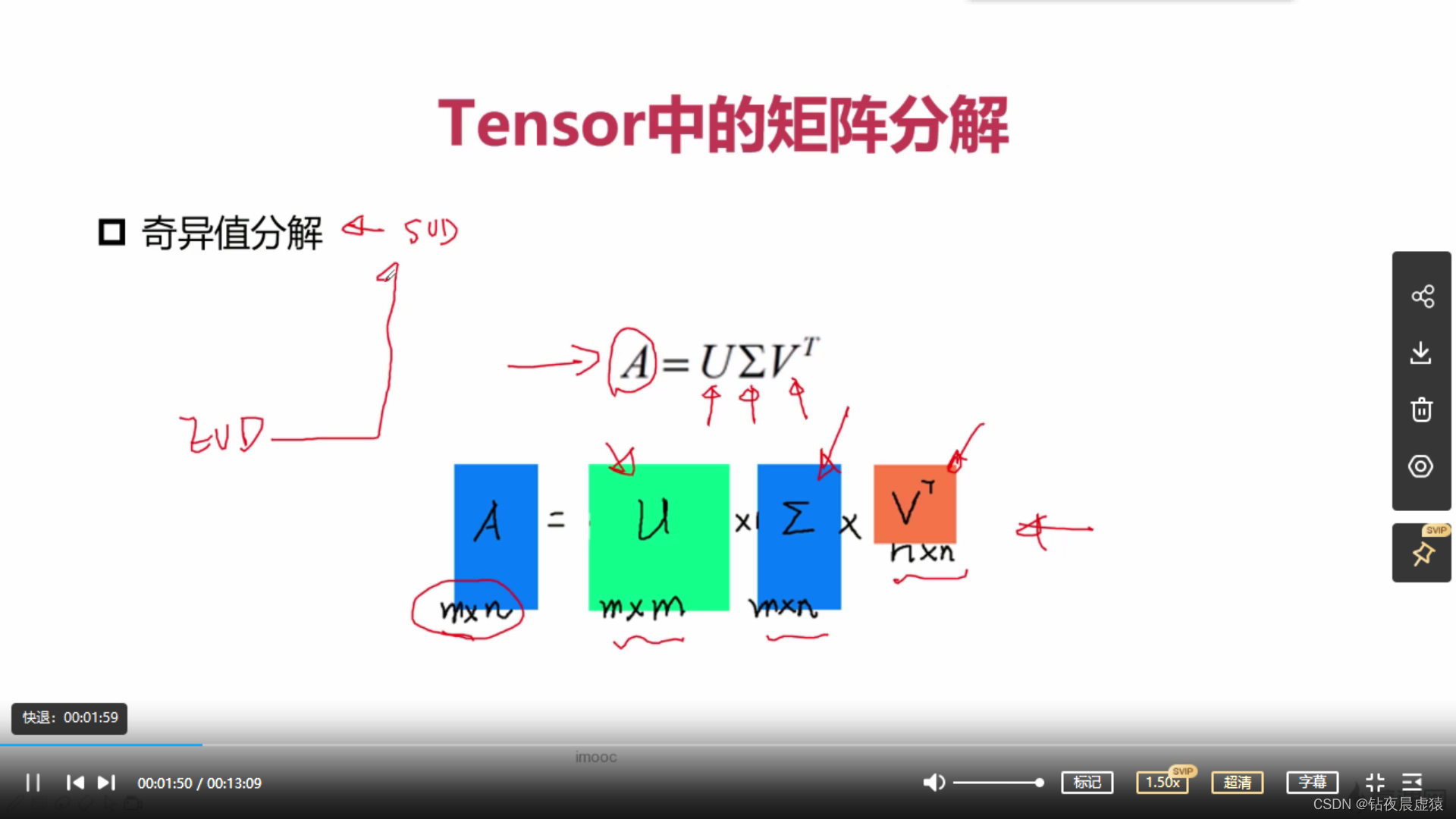

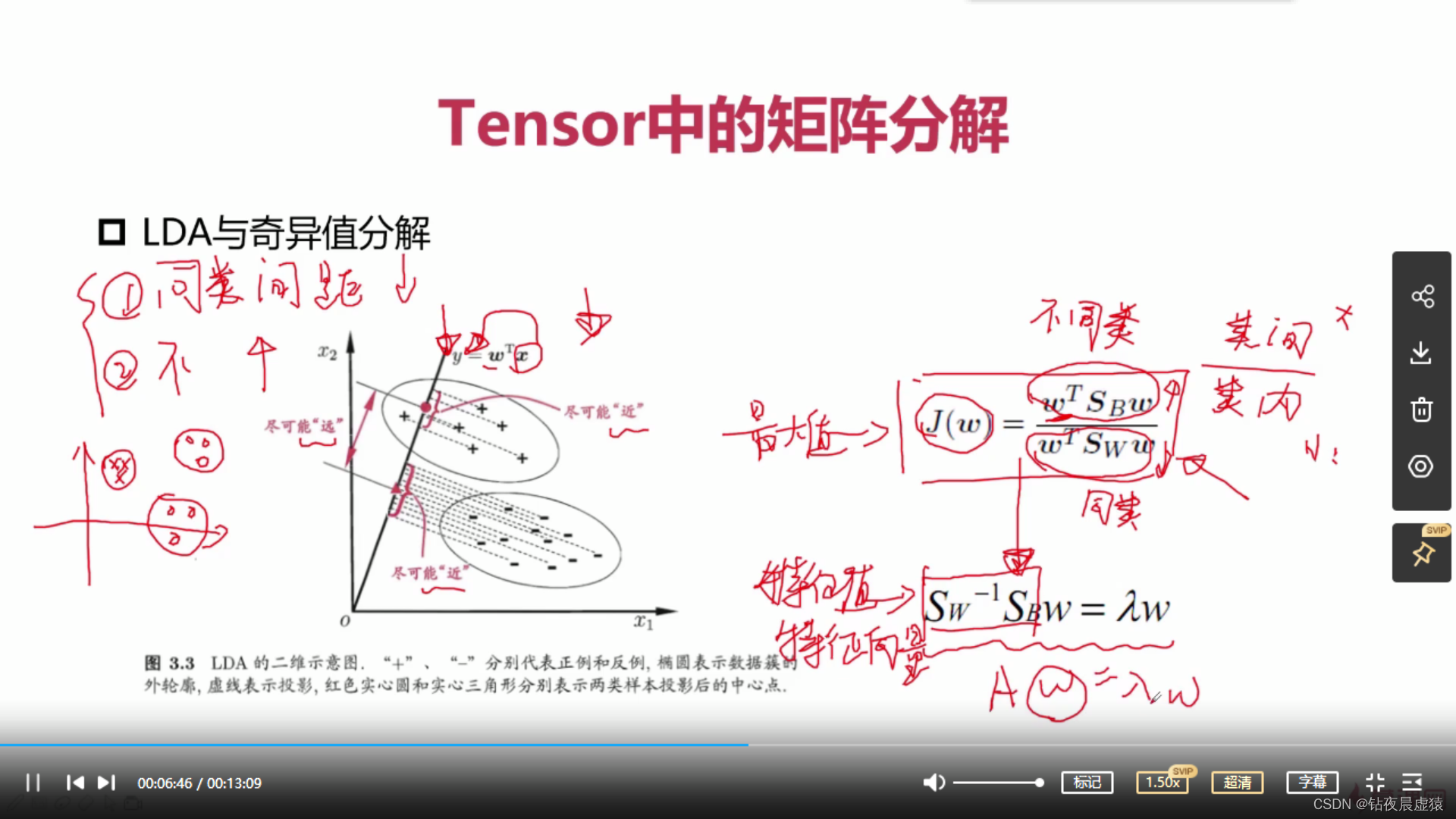

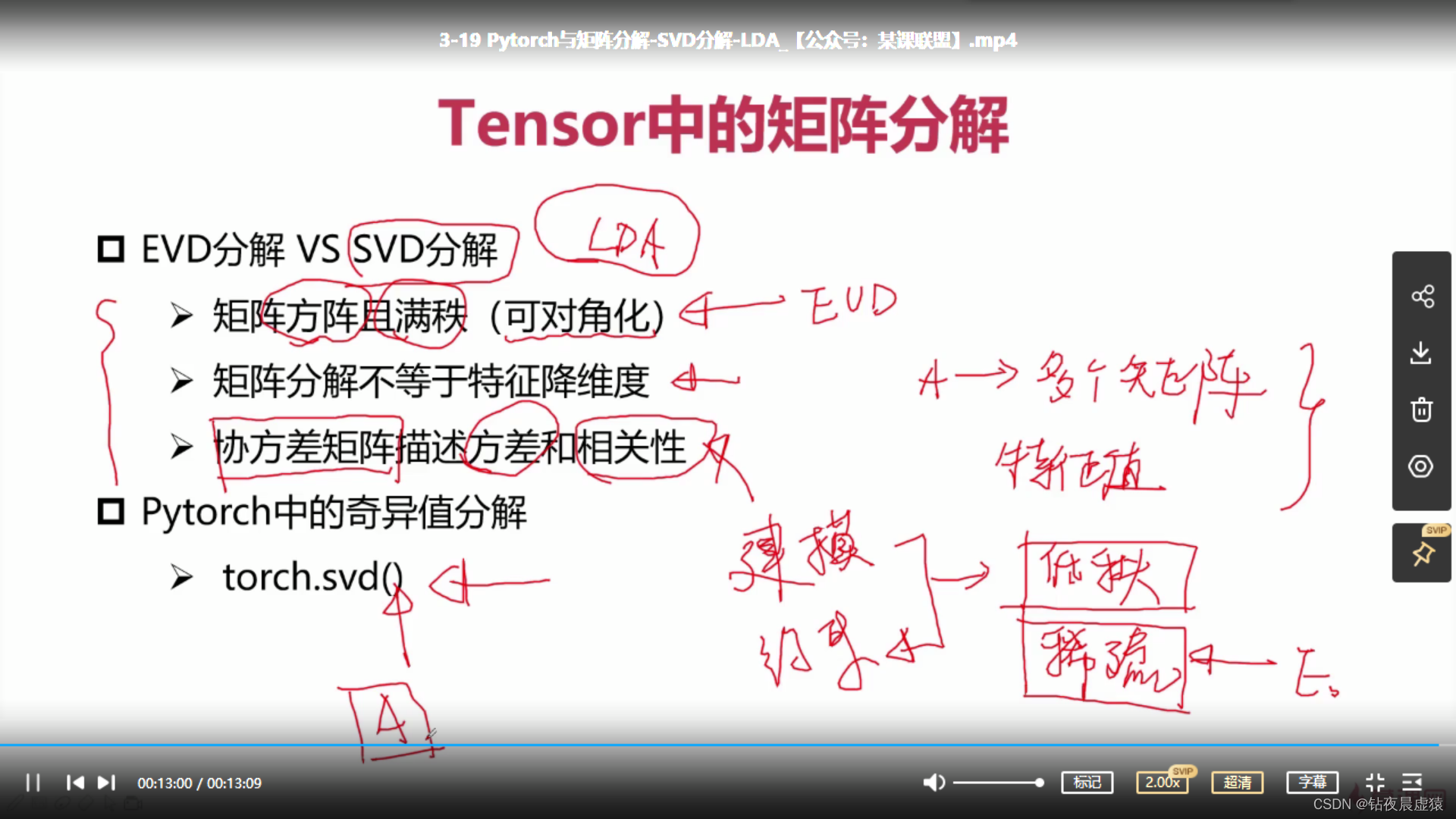
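
The PCA material is only in the screenshots. One common way to compute it, sketched here under the assumption that PCA means projecting centered data onto its top right singular vectors (random data and `k = 2` are chosen for illustration):

```python
import torch

torch.manual_seed(0)
x = torch.rand(100, 5)                          # 100 samples, 5 features

# 1. Center each feature
x_centered = x - x.mean(dim=0, keepdim=True)

# 2. SVD of the centered data; rows of Vh are the principal directions
U, S, Vh = torch.linalg.svd(x_centered, full_matrices=False)

# 3. Keep the top-k directions and project the data onto them
k = 2
components = Vh[:k]                             # shape (k, 5)
projected = x_centered @ components.T           # shape (100, k)

# Variance explained by each direction
explained_var = S ** 2 / (x.shape[0] - 1)
print(projected.shape)
print(explained_var)
```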

19. PyTorch and Matrix Decomposition: SVD and LDA
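
This heading has no content in the notes. A minimal SVD sketch using `torch.linalg.svd` on a random matrix chosen for illustration; LDA is not covered here.

```python
import torch

torch.manual_seed(0)
a = torch.rand(4, 3)

# Reduced SVD: a = U @ diag(S) @ Vh
U, S, Vh = torch.linalg.svd(a, full_matrices=False)
print(U.shape, S.shape, Vh.shape)

# Multiplying the factors back together recovers a (up to rounding)
reconstructed = U @ torch.diag(S) @ Vh
print(torch.allclose(reconstructed, a, atol=1e-5))

# Keeping only the largest singular value gives a rank-1 approximation
rank1 = S[0] * torch.outer(U[:, 0], Vh[0])
print(rank1.shape)
```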

20. PyTorch and Tensor Clipping

import torch

a = torch.rand(3, 3) * 10

print(a)

a = a.clamp(2, 5)

print(a)

tensor([[7.3781, 6.8925, 8.6554],

[6.8215, 0.9148, 4.5870],

[5.7040, 6.4257, 3.9602]])

tensor([[5.0000, 5.0000, 5.0000],

[5.0000, 2.0000, 4.5870],

[5.0000, 5.0000, 3.9602]])

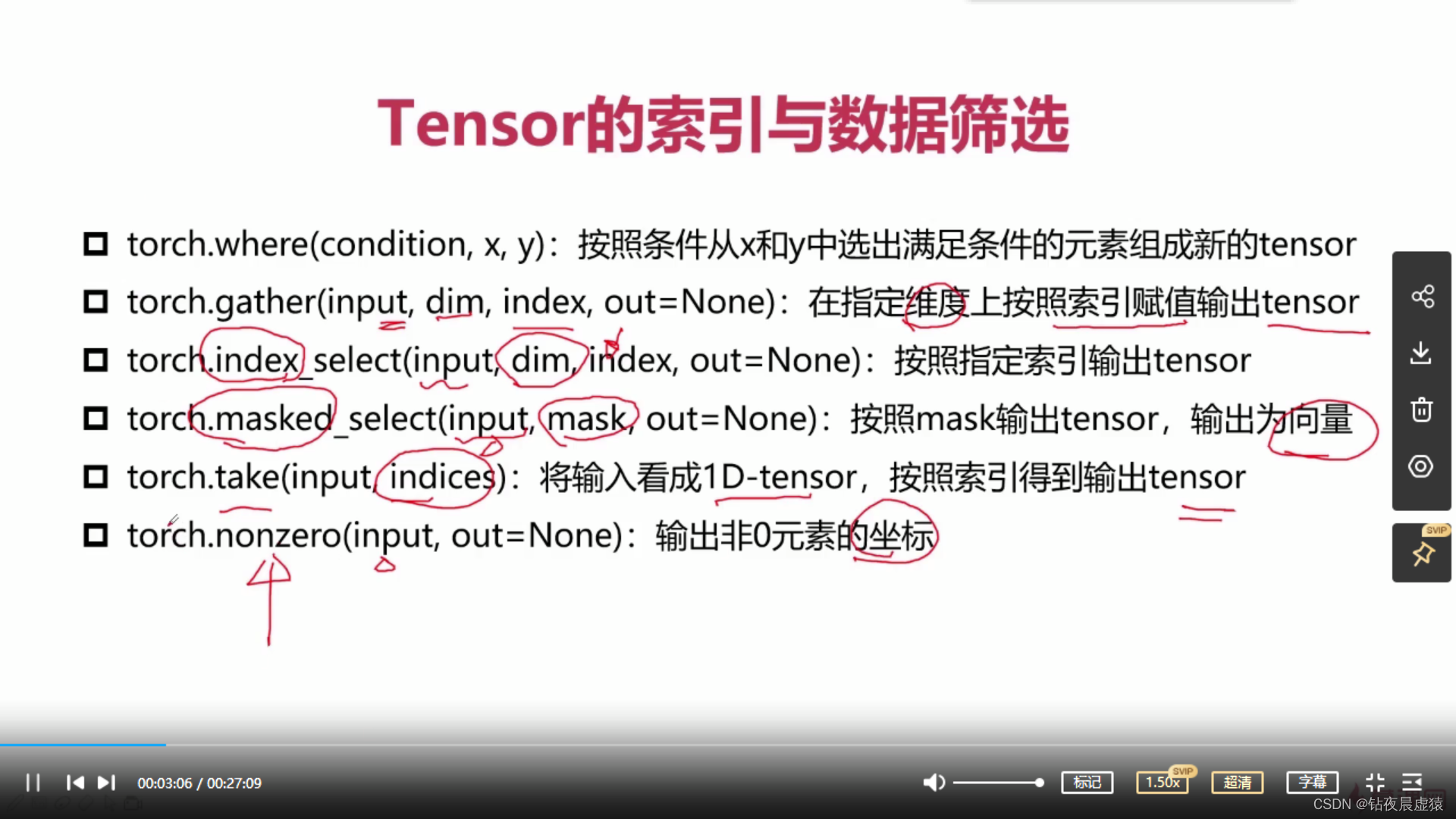

21. PyTorch and Tensor Indexing and Data Filtering

import torch

# torch.where

a = torch.rand(4, 4)

b = torch.rand(4, 4)

print(a)

print(b)

out = torch.where(a > 0.5, a, b)

print(out)

# torch.index_select

print("torch.index_select")

a = torch.rand(4, 4)

print(a)

out = torch.index_select(a, dim=0, index=torch.tensor([0, 3, 2]))

print(out, out.shape)

# torch.gather

print("torch.gather")

a = torch.linspace(1, 16, 16).view(4, 4)

print(a)

out = torch.gather(a, dim=0, index=torch.tensor([[0, 1, 1, 1],

[0, 1, 2, 2],

[0, 1, 3, 3]]))

print(out, out.shape)
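
Filtering by a condition is another common case alongside `where`, `index_select`, and `gather`. A sketch using `torch.masked_select` and `torch.nonzero`, which are additions here and not taken from the notes:

```python
import torch

a = torch.linspace(1, 16, 16).view(4, 4)
mask = a > 8                              # boolean tensor, same shape as a

selected = torch.masked_select(a, mask)   # 1-D tensor of the elements where mask is True
same = a[mask]                            # boolean indexing gives the same result
positions = torch.nonzero(mask)           # (row, col) index of each True entry

print(selected)
print(positions)
```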